Ensuring Reliability: A Guide to Ruggedness and Robustness Testing for Electrochemical Pharmaceutical Methods


Abstract

This article provides a comprehensive guide for researchers and pharmaceutical development professionals on implementing ruggedness and robustness testing for electrochemical analytical methods. It covers the foundational definitions and regulatory importance of these tests, explores systematic methodological approaches including Design of Experiments (DoE) and the Analytical Quality by Design (AQbD) framework, and offers practical troubleshooting strategies for common challenges. The content also details the integration of these tests into method validation protocols and compares electrochemical techniques with traditional chromatographic methods. By synthesizing current best practices and future trends, this guide aims to equip scientists with the knowledge to develop reliable, reproducible, and defensible electrochemical methods for drug analysis.

The Pillars of Reliability: Defining Ruggedness and Robustness in Electroanalysis

In the rigorous world of analytical chemistry and pharmaceutical development, the reliability of a method is paramount. Two key concepts—robustness and ruggedness—serve as critical indicators of a method's reliability, yet they are frequently confused. Robustness evaluates a method's stability when subjected to small, deliberate variations in its internal parameters, such as pH or temperature [1] [2]. Conversely, ruggedness assesses the reproducibility of analytical results when the method is applied under varying external conditions, such as different analysts, instruments, or laboratories [1] [3]. Within the context of electrochemical and pharmaceutical methods, understanding this distinction is not merely academic; it is a fundamental requirement for ensuring data integrity, facilitating successful technology transfer, and meeting stringent regulatory compliance [4] [5]. This guide provides a detailed, objective comparison of these two validation parameters to support researchers, scientists, and drug development professionals in their work.

Conceptual Breakdown: Internal vs. External Stability

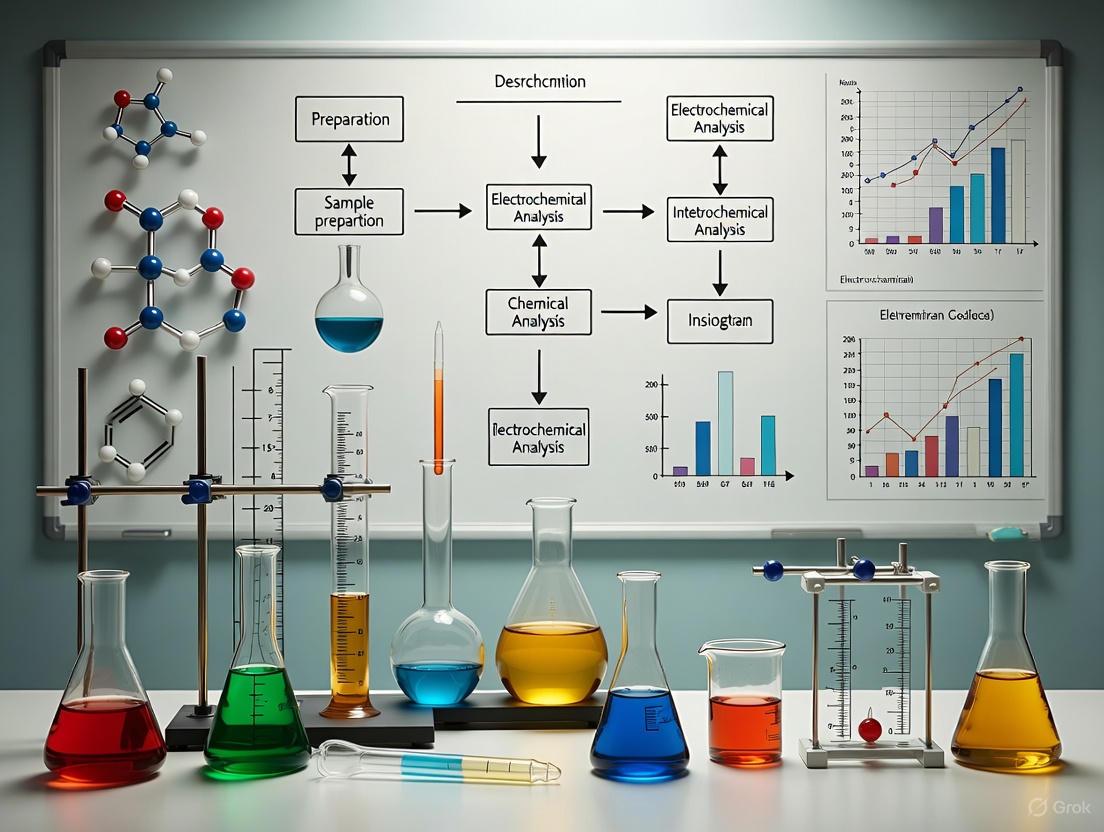

The core distinction between robustness and ruggedness lies in the source and scale of the variations against which a method is tested. The following diagram illustrates the primary factors considered in each type of testing.

Diagram: Key factors evaluated in robustness vs. ruggedness testing. Robustness focuses on internal parameters, while ruggedness assesses external conditions.

Defining Robustness

Robustness is an intra-laboratory study conducted during the method development phase [2]. Its purpose is to identify critical parameters and establish a "method operating space" by determining how susceptible the method is to slight, intentional changes in its defined conditions [1] [5]. A robust method is one that can tolerate the minor fluctuations inevitable in routine laboratory work without producing significantly different results. This is a measure of the method's internal stability [6].

Defining Ruggedness

Ruggedness testing, often conducted later in the validation lifecycle, is a measure of a method's reproducibility across real-world variations [2]. It evaluates the method's performance when exposed to changes in external factors that are not specified in the method protocol, such as the skill of different analysts, instrument models from various manufacturers, or environmental conditions in different laboratories [1] [3]. A rugged method ensures that a product's quality assessment remains consistent across a global supply chain or when methods are transferred to a contract research organization (CRO) [5].

Comparative Analysis: A Side-by-Side Examination

The table below provides a structured, detailed comparison of robustness and ruggedness across several critical dimensions.

| Aspect | Robustness | Ruggedness |
|---|---|---|
| Core Definition | Measures capacity to remain unaffected by small, deliberate variations in method parameters [1] [7]. | Degree of reproducibility of results under a variety of normal, expected external conditions [8]. |
| Type of Variations | Small, controlled changes to internal method parameters (e.g., mobile phase composition ±1%, temperature ±2°C, flow rate ±0.1 mL/min) [1] [2]. | Broader changes in external conditions (e.g., different analysts, instruments, laboratories, reagent lots) [1] [3]. |
| Primary Objective | To identify critical parameters, establish a method's operational range, and ensure reliability during routine use in a single lab [1] [5]. | To ensure the method yields consistent results when applied in different settings, supporting method transfer and multi-site studies [2] [3]. |
| Typical Scope | Narrow, intra-laboratory. Focused on conditions directly affecting the analytical separation or detection [1]. | Broad, often inter-laboratory. Encompasses reproducibility across different environments and users [1] [2]. |
| Regulatory Context | Included in ICH Q2(R1) definition; often investigated during development to inform system suitability tests [7] [8]. | Addressed under "Intermediate Precision" in ICH Q2(R1); the term "ruggedness" is used by the USP [3] [8]. |
| Common Techniques | Primarily used in chromatographic analyses (HPLC, UPLC, GC) and capillary electrophoresis [1] [9]. | Applied across all analytical methods, especially those intended for transfer between QC labs or multi-site manufacturing [3]. |

Experimental Protocols for Testing

A methodical approach to testing is essential for generating meaningful data on robustness and ruggedness. The following workflow outlines a standard protocol for designing and executing these studies.

Diagram: General workflow for designing and executing robustness and ruggedness tests.

Robustness Testing Methodology

A well-designed robustness test uses structured experimental designs to efficiently evaluate multiple factors simultaneously.

Factor Selection: Identify key method parameters suspected of influencing results. For an HPLC method, these typically include mobile phase composition, column temperature, and flow rate [1] [2].

Experimental Design: Utilize multivariate screening designs to study several factors in a minimal number of experiments.

- Full Factorial Design: Tests all possible combinations of factors at their high and low levels. Suitable for a small number of factors (e.g., 2^k runs for k factors) [8].

- Fractional Factorial Design: A carefully chosen subset of a full factorial design. Used when the number of factors is larger, as it reduces the number of runs while still estimating main effects [8].

- Plackett-Burman Design: A highly efficient screening design for identifying the most influential factors from a large set. It is particularly useful for ruggedness testing, where the goal is to identify critical external factors quickly [8].

Data Analysis: Subject the resulting data (e.g., peak area, retention time, resolution) to statistical analysis. The effects of each parameter variation are calculated, and tools like Analysis of Variance (ANOVA) are used to determine if these effects are statistically significant [3] [8]. The outcome defines the method's tolerance for each parameter.
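As a rough illustration of the full and fractional factorial designs listed above, the sketch below builds a two-level full factorial in coded units (-1 for the low level, +1 for the high level) and a half-fraction in which the last factor is set to the product of the others. The function names and the choice of generator are assumptions made for this example and are not taken from any cited protocol.

```python
from itertools import product


def full_factorial(k):
    """Return all 2**k runs of a two-level design in coded units (-1, +1)."""
    return [list(run) for run in product((-1, +1), repeat=k)]


def half_fraction(k):
    """Return a 2**(k-1) half-fraction of a two-level design.

    The last factor is set to the product of the first k-1 factors,
    which is one common generator choice (assumed here for illustration).
    """
    runs = []
    for base in product((-1, +1), repeat=k - 1):
        last = 1
        for level in base:
            last *= level
        runs.append(list(base) + [last])
    return runs


full_3 = full_factorial(3)  # 3 factors: 8 runs
half_4 = half_fraction(4)   # 4 factors: 8 runs instead of 16
```

Halving the run count comes at a price: in this half-fraction each main effect is confounded with a three-factor interaction, which is usually acceptable for screening but should be kept in mind when interpreting the results.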

Ruggedness Testing Methodology

Ruggedness testing often takes the form of an intermediate precision study as defined by ICH Q2(R1) [8].

Factor Selection: The variables tested are external to the method procedure.

- Different analysts with varying skill levels and experience [1] [3]

- Different instruments of the same model or from different manufacturers [2] [5]

- Different laboratories, potentially in different geographic locations [1] [2]

- Different days or weeks to account for temporal variations [1] [3]

- Different batches of critical reagents or columns [3] [5]

Experimental Design: A common approach is to have multiple analysts in one or more laboratories analyze the same homogeneous sample set over different days. The design should allow for the quantification of variance contributed by each of these factors [3].

Data Analysis: The primary metric for evaluation is precision, expressed as the relative standard deviation (RSD). The overall variability observed under these changing conditions (intermediate precision) is compared to the variability under stable conditions (repeatability). A low RSD across all factors demonstrates that the method is rugged [3].
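The comparison described above can be sketched in a few lines. The example below is a simplified illustration, not a full variance-component analysis: it takes the pooled within-group RSD as the repeatability estimate and the RSD of all results taken together as a stand-in for intermediate precision. The group labels and assay values are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev


def rsd_percent(values):
    """Relative standard deviation (%), using the sample standard deviation."""
    return 100.0 * stdev(values) / mean(values)


def repeatability_rsd(groups):
    """Pooled within-group SD, expressed relative to the grand mean (%)."""
    all_values = [v for g in groups.values() for v in g]
    pooled_var = (
        sum((len(g) - 1) * stdev(g) ** 2 for g in groups.values())
        / sum(len(g) - 1 for g in groups.values())
    )
    return 100.0 * sqrt(pooled_var) / mean(all_values)


def overall_rsd(groups):
    """RSD of all results pooled across analysts and days (%)."""
    return rsd_percent([v for g in groups.values() for v in g])


# Hypothetical assay results (% label claim), one group per analyst/day.
results = {
    ("analyst_A", "day_1"): [99.0, 100.0, 101.0],
    ("analyst_B", "day_2"): [101.0, 102.0, 103.0],
}
rsd_repeat = repeatability_rsd(results)
rsd_overall = overall_rsd(results)
```

An overall RSD that is markedly larger than the repeatability RSD points to a contribution from the changing conditions (analyst, day, instrument) rather than from the measurement itself.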

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table lists key materials and solutions whose quality and consistency are critical for successful robustness and ruggedness testing, particularly in chromatographic and electrochemical analyses.

| Item | Function & Importance in Testing |
|---|---|
| HPLC/UPLC Columns (Different Lots) | The stationary phase is critical for separation. Testing different column lots from the same supplier and equivalent columns from different suppliers is a core part of robustness testing to guard against batch-to-batch variability [7] [5]. |
| Chromatographic Reagents & Buffers | The purity and source of solvents, pH modifiers (e.g., trifluoroacetic acid), and buffer salts (e.g., phosphate, acetate) can impact baseline noise, retention time, and peak shape. Testing with reagents from multiple vendors ensures method ruggedness [2] [5]. |
| Reference Standards | Highly characterized materials with known purity and concentration used to calibrate the analytical method. Their stability and consistent quality are non-negotiable for obtaining accurate and reproducible results in both types of tests [5]. |
| Cation Exchange Membranes | In electrochemical methods like electrochemical stripping (ECS) for nutrient recovery, these membranes are key components. Their susceptibility to fouling by organics or salts directly impacts the long-term robustness of the system [4]. |
| Omniphobic Membranes | Specialized membranes used in electrochemical reactors to resist wetting and fouling. Evaluating their performance and lifetime under varying conditions is crucial for assessing the method's robustness over time [4]. |

Robustness and ruggedness are complementary but distinct pillars of a reliable analytical method. Robustness provides the foundational assurance that a method will perform consistently despite minor, inevitable fluctuations in its internal parameters within a single laboratory. Ruggedness confirms that this reliability translates successfully across the broader, real-world variables of different analysts, instruments, and locations. For researchers in electrochemical and pharmaceutical fields, a deliberate and sequential strategy—first establishing robustness during method development, then confirming ruggedness prior to method transfer—is essential for ensuring data integrity, regulatory compliance, and the successful commercialization of safe and effective products.

The Critical Role in Pharmaceutical Quality Control and Regulatory Compliance

In the highly regulated pharmaceutical industry, the quality of analytical data is paramount for ensuring drug safety and efficacy. Ruggedness and robustness testing serve as critical methodological pillars that guarantee the reliability of analytical procedures, particularly as methods are transferred between laboratories and instruments. These tests provide a systematic approach to validate that analytical methods can withstand small, deliberate variations in method parameters without affecting the final results, thereby ensuring regulatory compliance and data integrity throughout the drug development lifecycle.

The International Council for Harmonisation (ICH, formerly the International Conference on Harmonisation) defines the robustness of an analytical procedure as "a measure of its capacity to remain unaffected by small, but deliberate variations in method parameters and provides an indication of its reliability during normal usage" [10] [11]. Although the terms robustness and ruggedness are often used interchangeably, some authorities, such as the United States Pharmacopeia (USP), distinguish between them and treat ruggedness as the degree of reproducibility under a variety of normal test conditions such as different laboratories, analysts, or instruments [10]. The fundamental objective of these tests is to identify critical method parameters that could potentially impair method performance during routine use and to establish appropriate system suitability test (SST) limits to ensure the analytical procedure remains valid whenever and wherever employed [10] [12].

Theoretical Foundations and Regulatory Framework

Definitions and Distinctions

The concepts of ruggedness and robustness, while closely related, carry nuanced definitions across different regulatory bodies. The ICH guidelines consider these terms as synonyms, defining them as the capacity of an analytical procedure to remain unaffected by small, deliberate variations in method parameters [10] [12]. In contrast, the United States Pharmacopeia (USP) establishes a clearer distinction: it defines robustness as the measure of a method's capacity to remain unaffected by small, deliberate variations in method parameters, while ruggedness refers to the degree of reproducibility of test results obtained by the analysis of the same samples under a variety of normal test conditions [10].

This distinction is significant in pharmaceutical quality control. Ruggedness, per the USP definition, encompasses variations such as different laboratories, different analysts, different instruments, different reagent lots, and different elapsed assay times, which makes it essentially equivalent to an assessment of intermediate precision or reproducibility [10]. Robustness testing, on the other hand, specifically examines the influence of small, intentional changes to method parameters described in the operating procedure, such as mobile phase pH, column temperature, or flow rate in chromatographic methods [11].

Regulatory Significance and Timing

Although not yet formally required by ICH guidelines, robustness testing is increasingly demanded by regulatory authorities like the US Food and Drug Administration (FDA) for drug registration [10]. Its timing within the method lifecycle has also shifted. Initially performed at the end of method validation, just before interlaboratory studies, robustness testing is now recommended earlier, either at the end of method development or at the beginning of the validation procedure [10] [12]. This shift prevents the costly scenario in which a method is found to be non-robust after extensive validation and must be redeveloped and revalidated [10].

Regulators emphasize that "one consequence of the evaluation of robustness should be that a series of system suitability parameters (e.g., resolution tests) is established to ensure that the validity of the analytical procedure is maintained whenever used" [10]. This expectation directly links robustness testing with the establishment of meaningful, experimentally justified system suitability test limits rather than arbitrary limits based solely on analyst experience [12].

Methodological Approaches to Ruggedness and Robustness Testing

Experimental Design Strategies

A well-structured robustness test follows a systematic approach with clearly defined steps to ensure comprehensive evaluation of method parameters [12]. The initial phase involves selecting factors and their levels, followed by choosing an appropriate experimental design, defining responses, executing experiments, estimating factor effects, and finally drawing chemically relevant conclusions [11] [12].

For quantitative factors such as mobile phase pH, column temperature, or flow rate in HPLC methods, two extreme levels are typically chosen symmetrically around the nominal level described in the operating procedure [11]. The interval between these levels should slightly exceed the variations expected when transferring the method between laboratories or instruments [12]. In certain cases, asymmetric intervals around the nominal level are recommended, particularly when the response does not change continuously as a function of factor levels, such as absorbance or peak area as a function of detection wavelength when the nominal wavelength is at maximum absorbance [11].
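The level-setting step described above can be sketched as follows: each quantitative factor gets a low and a high level placed symmetrically around its nominal value, and a coded design row (-1 or +1 per factor) is then translated into the real settings for that run. The factor names, nominal values, and intervals are hypothetical and chosen only for illustration.

```python
def symmetric_levels(nominal, interval):
    """Return (low, high) levels placed symmetrically around the nominal value."""
    return (nominal - interval, nominal + interval)


def decode_run(coded_run, levels):
    """Translate a coded run (-1 = low, +1 = high) into real factor settings."""
    return {
        name: levels[name][0] if code < 0 else levels[name][1]
        for name, code in coded_run.items()
    }


# Hypothetical factors for a voltammetric method.
levels = {
    "pH": symmetric_levels(7.0, 0.2),
    "temperature_C": symmetric_levels(25.0, 2.0),
}
run = decode_run({"pH": -1, "temperature_C": +1}, levels)
```

Where an asymmetric interval is more appropriate, the `(low, high)` tuple for that factor can simply be entered directly instead of calling `symmetric_levels`.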

Table 1: Key Steps in Ruggedness and Robustness Testing

| Step | Description | Considerations |
|---|---|---|
| Factor Selection | Choosing method parameters to test | Include operational and environmental factors [12] |
| Level Definition | Setting high/low values for each factor | Intervals should represent expected transfer variations [11] |
| Design Selection | Choosing experimental design structure | Plackett-Burman or fractional factorial designs typically used [11] |
| Response Selection | Identifying outputs to measure | Include both assay and system suitability test responses [11] |
| Experiment Execution | Performing trials according to design | Random sequence or anti-drift sequences recommended [11] |
| Effect Estimation | Calculating factor influences on responses | Difference between average responses at high and low levels [11] |
| Statistical Analysis | Interpreting effect significance | Normal probability plots or statistical tests [11] |

Statistical Evaluation and Data Interpretation

The evaluation of robustness test results employs both graphical and statistical methods to identify significant effects. The effect of each factor (EX) on a response (Y) is calculated as the difference between the average responses when the factor was at its high level and the average responses when it was at its low level [11]. For designs with N experiments, this is mathematically expressed as EX = (ΣY+)/(N/2) - (ΣY-)/(N/2), where Y+ and Y- represent responses at high and low factor levels respectively [11].

The significance of these effects can be evaluated graphically using normal or half-normal probability plots, where non-significant effects tend to fall on a straight line while significant effects deviate from this line [11]. Statistically, effects can be compared to critical effects derived from dummy factors (in Plackett-Burman designs) or from two-factor interactions (in fractional factorial designs) [11]. Alternatively, algorithms like that of Dong can provide statistically derived critical effects at a specified significance level (typically α = 0.05) [11].
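A minimal sketch of the effect calculation and the dummy-factor comparison is given below, using an 8-run Plackett-Burman design with four real factors and three dummy columns. Several things here are assumptions for illustration: the factor names, the synthetic responses, and the specific critical-effect formula (standard error taken as the root mean square of the dummy effects, multiplied by a two-sided t value with one degree of freedom per dummy), which is one commonly used form rather than something the text above prescribes.

```python
import math

# Standard first row of the 8-run Plackett-Burman design (7 columns).
PB8_GENERATOR = [+1, +1, +1, -1, +1, -1, -1]
# Two-sided Student t value for alpha = 0.05 and 3 degrees of freedom
# (one degree of freedom per dummy column in this example).
T_CRIT_3_DF = 3.182


def pb8_design():
    """Build the 8 x 7 design: 7 cyclic shifts of the generator plus a row of -1."""
    n = len(PB8_GENERATOR)
    rows = [PB8_GENERATOR[-s:] + PB8_GENERATOR[:-s] for s in range(n)]
    rows.append([-1] * n)
    return rows


def effect(design, responses, col):
    """Mean response at the high level minus mean response at the low level."""
    high = [y for row, y in zip(design, responses) if row[col] > 0]
    low = [y for row, y in zip(design, responses) if row[col] < 0]
    return sum(high) / len(high) - sum(low) / len(low)


def critical_effect(dummy_effects, t_crit):
    """Critical effect from dummy columns (assumed form: t * RMS of dummy effects)."""
    se = math.sqrt(sum(e * e for e in dummy_effects) / len(dummy_effects))
    return t_crit * se


# Hypothetical column assignment and synthetic responses (not real data):
# only pH has a real effect; dummy_1 carries a little simulated noise.
columns = ["pH", "temperature", "scan_rate", "electrolyte_conc",
           "dummy_1", "dummy_2", "dummy_3"]
design = pb8_design()
responses = [100.0 + 2.0 * row[0] + 0.1 * row[4] for row in design]

effects = {name: effect(design, responses, j) for j, name in enumerate(columns)}
dummy_effects = [effects[n] for n in columns if n.startswith("dummy")]
e_crit = critical_effect(dummy_effects, T_CRIT_3_DF)
significant = [n for n in columns
               if not n.startswith("dummy") and abs(effects[n]) > e_crit]
```

In this synthetic case only the pH effect exceeds the critical effect. With real data, the same comparison would normally be complemented by a normal or half-normal probability plot.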

Application to Electrochemical Methods in Pharmaceutical Analysis

Unique Considerations for Electrochemical Techniques

While the fundamental principles of ruggedness and robustness testing apply across analytical techniques, electrochemical methods in pharmaceutical analysis present unique challenges and considerations. The operational principles of electrochemical systems, including their basis in oxidation-reduction (redox) processes and thermodynamic relationships, necessitate specialized approaches to robustness testing [13]. Key components such as proton exchange membranes, electrocatalysts, and electrode assemblies introduce specific parameters that must be evaluated for their potential impact on method performance [13].

Electrochemical hydrogen compressors (EHCs), for instance, rely on precise control of multiple interconnected components where the membrane-electrode assembly (MEA) represents a critical functional unit [13]. The performance of such systems depends on the careful balance of thermodynamic factors (Gibbs free energy, energy efficiency) and electrochemical concepts (redox reactions, cell potential) [13]. Understanding these fundamental relationships is essential for designing appropriate robustness tests that accurately assess method reliability.

Critical Parameters in Electrochemical Methods

For electrochemical methods used in pharmaceutical analysis, critical parameters typically include applied potential, electrode material and surface condition, electrolyte composition and pH, temperature, and reference electrode stability [13]. The selection of factors for robustness testing should focus on those parameters most likely to vary during method transfer or routine use, with particular attention to interactions between parameters that may affect overall system performance.

The thermodynamic foundation of electrochemical systems, as described by the relationship between Gibbs free energy (ΔG) and cell potential (ΔEeq) where ΔG = -nFΔEeq (with n representing the number of electrons transferred and F the Faraday constant), provides a theoretical basis for understanding the sensitivity of these methods to parameter variations [13]. This relationship highlights why factors affecting reaction kinetics or charge transfer efficiency may significantly impact method performance and should be prioritized in robustness evaluations.
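To make the relationship concrete, the short sketch below evaluates ΔG = -nFΔEeq and shows how a small change in cell potential translates into a change in free energy. The potentials used are arbitrary illustrative values, and the function name is an assumption for this example.

```python
FARADAY_C_PER_MOL = 96485.33212  # Faraday constant, C/mol


def gibbs_free_energy_j_per_mol(n_electrons, cell_potential_v):
    """Gibbs free energy change (J/mol) from delta_G = -n * F * delta_E_eq."""
    return -n_electrons * FARADAY_C_PER_MOL * cell_potential_v


# Illustrative values: a two-electron process at 0.50 V, then shifted by 10 mV.
dg_nominal = gibbs_free_energy_j_per_mol(2, 0.50)
dg_shifted = gibbs_free_energy_j_per_mol(2, 0.51)
dg_change = dg_shifted - dg_nominal  # roughly -1.93 kJ/mol for a 10 mV shift
```

Under these assumptions, a 10 mV shift in a two-electron process corresponds to about 1.9 kJ/mol, which gives a sense of scale when judging how tightly potential-related parameters need to be controlled.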

Comparative Analysis: Experimental Design and Data Interpretation

Design Selection for Different Method Types

The choice of experimental design for robustness testing depends on the number of factors to be investigated and considerations related to statistical interpretation. Two-level screening designs, particularly fractional factorial (FF) and Plackett-Burman (PB) designs, are most commonly employed as they allow examination of multiple factors in a relatively small number of experiments [11] [12].

Table 2: Comparison of Experimental Designs for Robustness Testing

| Design Type | Number of Experiments (N) | Maximum Factors | Key Features |
|---|---|---|---|
| Plackett-Burman | Multiple of 4 (8, 12, 16, etc.) | N-1 factors | Allows estimation of main effects only; unused columns serve as dummy factors [11] |
| Fractional Factorial | Power of 2 (8, 16, 32, etc.) | Varies with resolution | Can estimate some interaction effects along with main effects [11] |

For methods with a large number of potential factors, such as chromatographic techniques with multiple operational parameters, Plackett-Burman designs are particularly efficient. For example, an HPLC method with eight factors can be examined in a 12-experiment PB design, which also provides three dummy factors for statistical comparison [11]. The design selection should balance practical constraints (number of feasible experiments) with statistical needs (precision of effect estimation).
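The 12-experiment case mentioned above can be sketched as follows. The design is built from the standard 12-run Plackett-Burman generator row by cyclic shifting and adding a final row of low levels; eight columns are assigned to factors and the remaining three become dummy columns. The generic factor labels are placeholders, and the balance and orthogonality check is included only to make the design's properties visible.

```python
# Standard first row of the 12-run Plackett-Burman design (11 columns).
PB12_GENERATOR = [+1, +1, -1, +1, +1, +1, -1, -1, -1, +1, -1]


def plackett_burman_12():
    """Build the 12 x 11 design: 11 cyclic shifts of the generator plus a row of -1."""
    n = len(PB12_GENERATOR)
    rows = [PB12_GENERATOR[-s:] + PB12_GENERATOR[:-s] for s in range(n)]
    rows.append([-1] * n)
    return rows


def is_balanced_and_orthogonal(design):
    """Check that every column has equal +1/-1 counts and all column pairs are orthogonal."""
    n_cols = len(design[0])
    cols = [[row[j] for row in design] for j in range(n_cols)]
    balanced = all(sum(c) == 0 for c in cols)
    orthogonal = all(
        sum(a * b for a, b in zip(cols[i], cols[j])) == 0
        for i in range(n_cols)
        for j in range(i + 1, n_cols)
    )
    return balanced and orthogonal


design = plackett_burman_12()
n_columns = len(design[0])

# Placeholder labels: eight real factors, remaining columns left as dummies.
factors = [f"factor_{i}" for i in range(1, 9)]
dummies = [f"dummy_{i}" for i in range(1, n_columns - len(factors) + 1)]
column_names = factors + dummies
```

Because the columns are mutually orthogonal, each main effect is estimated independently of the others, and the dummy columns provide an estimate of experimental error without extra runs.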

Response Selection and Evaluation Criteria

The selection of appropriate responses is crucial for meaningful robustness testing. For quantitative analytical methods, assay responses such as content determinations, recoveries, or impurity levels are primary concerns [12]. A method is considered robust when no statistically significant effects are found on these quantitative responses [11]. Additionally, system suitability test (SST) responses should be evaluated, particularly for separation-based techniques where parameters like retention times, resolution, theoretical plate numbers, and peak asymmetry factors provide critical indicators of method performance [11] [12].

Even when a method demonstrates robustness in its quantitative aspects, SST responses may still show significant effects from certain factors [11]. These effects inform the establishment of appropriate system suitability test limits, as recommended by ICH guidelines [10]. The resulting SST limits are thus based on experimental evidence rather than arbitrary selection, enhancing the scientific validity of the analytical procedure [12].

Implementation Workflow and Protocol Development

The execution of robustness tests requires careful planning and protocol development to ensure reliable, interpretable results. The following diagram illustrates the complete workflow for ruggedness and robustness testing, from initial planning through final implementation of control measures:

Experimental Execution and Data Quality Assurance

The execution phase of robustness testing requires meticulous attention to experimental conditions and data quality. Experiments should ideally be performed in random sequence to minimize the influence of uncontrolled variables [11]. However, when practical constraints or anticipated time effects (such as HPLC column aging) exist, alternative approaches like anti-drift sequences or blocking by factors may be employed [11].

To address potential drift issues, replicated experiments at nominal conditions can be incorporated at regular intervals throughout the experimental sequence [11]. The responses from these nominal experiments allow for drift correction of all design experiment responses, ensuring that the estimated factor effects are not biased by time-related changes [11]. For practical reasons, experiments may be blocked by certain factors, such as performing all experiments on one chromatographic column before switching to an alternative column [11].
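One possible way to implement the randomization and drift correction described above is sketched below. It assumes that the run sequence starts and ends with a nominal experiment, that drift between two bracketing nominal runs is approximately linear, and that a multiplicative correction relative to the first nominal response is appropriate. These are assumptions of this example; other correction schemes exist.

```python
import random


def randomized_order(n_runs, seed):
    """Return a reproducible random execution order for the design runs."""
    order = list(range(n_runs))
    random.Random(seed).shuffle(order)
    return order


def drift_correct(sequence):
    """Correct design responses for drift using bracketing nominal runs.

    sequence: list of (kind, response) tuples in execution order, where kind
    is "nominal" or "design". The sequence must start and end with a nominal run.
    """
    nominal_pos = [i for i, (kind, _) in enumerate(sequence) if kind == "nominal"]
    if not nominal_pos or nominal_pos[0] != 0 or nominal_pos[-1] != len(sequence) - 1:
        raise ValueError("sequence must start and end with a nominal run")
    first_nominal = sequence[0][1]
    corrected = []
    for i, (kind, y) in enumerate(sequence):
        if kind != "design":
            continue
        before = max(p for p in nominal_pos if p < i)
        after = min(p for p in nominal_pos if p > i)
        frac = (i - before) / (after - before)
        # Nominal response expected at this position (linear interpolation).
        nominal_here = sequence[before][1] + frac * (sequence[after][1] - sequence[before][1])
        corrected.append(y * first_nominal / nominal_here)
    return corrected


# Synthetic example: pure linear drift and no factor effect.
sequence = [("nominal", 100.0), ("design", 99.0), ("design", 98.0),
            ("design", 97.0), ("nominal", 96.0)]
corrected = drift_correct(sequence)
order = randomized_order(8, seed=42)
```

In the synthetic sequence the design responses differ only because of drift, so the corrected values all return to the initial nominal response.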

The solutions analyzed during robustness testing should represent typical samples encountered during routine method application, including appropriate matrices and concentration ranges [11]. For methods with wide dynamic ranges, multiple concentration levels may be evaluated to ensure robustness across the validated range [12].

Essential Research Reagents and Materials

The experimental evaluation of ruggedness and robustness requires specific materials and reagents tailored to the analytical technique being validated. The following table details key research solutions and materials essential for conducting comprehensive robustness studies:

Table 3: Essential Research Reagents and Materials for Robustness Testing

| Category | Specific Examples | Function in Robustness Testing |
|---|---|---|
| Chromatographic Columns | Different batches, alternative manufacturers | Evaluate column-to-column variability and method transferability [11] |
| Mobile Phase Components | Different pH values, buffer concentrations, organic modifier ratios | Assess sensitivity to mobile phase preparation variations [11] [12] |
| Reference Standards | Drug substances, impurity standards, internal standards | Verify method performance across varied analytical conditions [11] |
| Electrochemical Components | Various membrane types, electrocatalyst materials, electrode configurations | Test performance with alternative materials in electrochemical methods [13] |
| Sample Matrices | Placebo formulations, simulated biological fluids | Evaluate matrix effects under varied method conditions [11] |

For electrochemical methods specifically, critical components include proton exchange membranes with varying thicknesses and reinforcement materials, electrocatalysts with different compositions and loadings (including non-precious metal alternatives to reduce costs), and gas diffusion layers with different structural properties [13]. These materials allow researchers to assess how variations in core electrochemical components affect method performance and durability.

Regulatory Compliance and Data Integrity Considerations

ALCOA+ Principles and Data Governance

The implementation of ruggedness and robustness testing must adhere to fundamental data integrity principles, particularly the ALCOA+ framework, which requires data to be Attributable, Legible, Contemporaneous, Original, and Accurate, with the "+" adding Complete, Consistent, Enduring, and Available [14]. In practical terms, this means that all robustness testing data must be traceable to specific analysts, recorded in real-time, maintained in original form, and protected from unauthorized modifications.

Regulators increasingly focus on data integrity during inspections, with common findings including inadequate audit trail reviews and poorly managed user accounts for electronic systems [14]. A 2023 analysis of FDA Form 483 observations revealed significant citations for poor system controls and missing metadata reviews, highlighting the importance of robust data governance in robustness testing programs [14]. Implementation of unique user logins, enabled audit trails, locked method versions, and required reason codes for any data changes are essential components of a compliance-focused robustness testing strategy [14].

Integration with Quality Management Systems

Ruggedness and robustness testing should be formally incorporated into the pharmaceutical quality management system (QMS) through standard operating procedures (SOPs), change control processes, and method transfer protocols [15]. The findings from robustness tests directly inform the establishment of system suitability test limits and control strategies for critical method parameters [10] [12].

Effective quality systems make compliance repeatable by turning GMP principles into daily habits: validated processes, clean facilities, trained personnel, and documented evidence [15]. According to current FDA perspectives, CDER's Site Catalog lists over 4,800 drug-manufacturing sites worldwide, with 94% of recent inspections resulting in No Action Indicated (NAI) or Voluntary Action Indicated (VAI) classifications, reflecting generally strong quality systems with targeted enforcement where gaps remain [15].

Ruggedness and robustness testing represent indispensable components of pharmaceutical quality control, providing scientific evidence that analytical methods will perform reliably when transferred between laboratories or implemented in routine use. Through carefully designed experiments and statistical analysis of factor effects, these tests identify critical method parameters that require control and inform the establishment of scientifically justified system suitability criteria.

The integration of robustness testing early in method development, combined with adherence to data integrity principles and regulatory expectations, creates a foundation for reliable analytical results throughout the drug product lifecycle. As analytical technologies evolve, particularly with the increasing adoption of electrochemical methods in pharmaceutical analysis, the fundamental approach to ruggedness and robustness testing remains essential for ensuring data quality, regulatory compliance, and ultimately, patient safety.

Electrochemical methods have emerged as powerful, sensitive, and cost-effective tools in pharmaceutical analysis, playing critical roles in drug development, quality control, and therapeutic monitoring [16]. The ruggedness and robustness of these methods—their ability to remain unaffected by small, deliberate variations in method parameters—is paramount in highly regulated pharmaceutical environments [17]. This guide objectively compares the performance of electrochemical setups by examining the foundational parameters that dictate their reliability: pH, electrode material, temperature, and incubation time. Understanding and controlling these variables is essential for developing robust analytical methods that generate consistent, accurate data for critical decisions in drug development.

Core Parameters & Experimental Performance Data

The performance of an electrochemical method is intrinsically linked to its operational parameters. The following section provides a comparative analysis of how pH, electrode material, temperature, and incubation time influence key analytical outcomes, supported by experimental data from pharmaceutical applications.

pH

The pH of the electrolyte solution is a critical parameter, as it directly influences the electrochemical behavior of analytes, including their electron transfer kinetics and thermodynamic potential.

Table 1: Impact of pH on Electrochemical Detection of Pharmaceuticals

| Analyte | Electrode Material | Optimal pH | Observed Effect | Detection Technique | Reference |

|---|---|---|---|---|---|

| Gemcitabine | Boron-Doped Diamond (BDD) | 5.0 (Highest current)7.4 (Physiological) | Oxidation current intensity peaks at pH 5, then decreases and stabilizes from pH 6-12. Oxidation potential decreases with increasing pH. | Differential Pulse Voltammetry (DPV) | [18] |

| Ephedrine-Type Alkaloids | Various Modified Electrodes | Variable | Electron transfer mechanisms, mass transport, and overall detection are heavily influenced by pH. | Voltammetric Techniques | [19] |

| Wound Status | Optical & Electrochemical Sensors | 4-6 (acute wounds); up to 10 (chronic wounds) | Acidic environment promotes healing; alkaline environment indicates bacterial infection. | Colorimetric & Electrochemical Monitoring | [20] |

Experimental Protocol for pH Optimization: A standard protocol for determining the optimal pH involves preparing a series of standard analyte solutions in different buffering systems (e.g., Britton-Robinson buffer, phosphate-buffered saline) covering a broad pH range (e.g., 2-12) [18]. Using a fixed electrode material (e.g., BDDE) and a controlled temperature, voltammetric measurements (e.g., DPV or CV) are performed for each pH level. The resulting peak current (signal intensity) and peak potential are plotted against pH to identify the value that provides the highest sensitivity and most stable signal for subsequent quantitative analysis.
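The final selection step of this protocol can be sketched in a few lines of Python. This is a minimal illustration, not the procedure used in [18]: the readings are hypothetical placeholders, and the helper names `best_ph` and `slope` are introduced here only for the example. The sketch picks the pH with the highest peak current and fits the slope of peak potential against pH. A slope near -59 mV per pH unit at 25 °C is what the Nernst equation predicts when equal numbers of protons and electrons take part in the electrode reaction.

```python
from statistics import mean

# Hypothetical DPV readings (illustrative only, not data from the cited study):
# pH -> (peak current in microamps, peak potential in volts)
readings = {
    3.0: (1.8, 1.42),
    4.0: (2.4, 1.36),
    5.0: (3.1, 1.30),
    6.0: (2.2, 1.24),
    7.0: (2.1, 1.18),
}

def best_ph(data):
    """Return the pH that gives the highest peak current."""
    return max(data, key=lambda ph: data[ph][0])

def slope(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    x_bar, y_bar = mean(xs), mean(ys)
    num = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    den = sum((x - x_bar) ** 2 for x in xs)
    return num / den

phs = sorted(readings)
potentials = [readings[ph][1] for ph in phs]

optimal_ph = best_ph(readings)
ep_slope_mv = slope(phs, potentials) * 1000  # convert V/pH to mV/pH

print(f"optimal pH: {optimal_ph}, dEp/dpH: {ep_slope_mv:.1f} mV/pH")
```

In practice the highest-current pH is only one criterion; signal stability and relevance to the sample matrix (for example, physiological pH) may justify a different choice.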

Electrode Material

The choice of working electrode material defines the electrochemical window, background current, sensitivity, and susceptibility to fouling.

Table 2: Comparison of Electrode Materials in Pharmaceutical Analysis

| Electrode Material | Key Advantages | Limitations / Performance Data | Exemplary Application |

|---|---|---|---|

| Boron-Doped Diamond (BDD) | Large potential window, low background current, reduced fouling, high stability. | Successfully detected Gemcitabine where glassy carbon, graphite, and platinum electrodes showed no signal [18]. | Direct detection of Gemcitabine in pharmaceutical formulations [18]. |

| Screen-Printed Electrodes (SPEs) | Disposable, compact, portable, versatile, ideal for miniaturization. | Deterioration of surface properties can occur when incubated in a humid CO2 environment for cell-based studies [21]. | Incubator-integrated platform for real-time cell monitoring and drug screening [21]. |

| Gold (Au) & Modified Electrodes | Good conductivity, facile surface modification with nanomaterials or polymers. | Required high pH values for Gemcitabine detection, making it impractical for direct analysis of physiological samples [18]. | Detection of exosomal RNAs from cancer cell lines [21]. |

| Carbon-Based Composites | Low cost, wide potential range, amenable to bulk modification. | May require additives like surfactants or complex modification steps (e.g., with Molecularly Imprinted Polymers) which can impact stability [18]. | Hybrids with metal oxides used for enhanced ephedrine detection [19]. |

Experimental Protocol for Electrode Material Selection: To compare electrode materials, prepare a standard solution of the target analyte at a known concentration in an optimal supporting electrolyte and pH. Perform identical voltammetric scans (e.g., CV or DPV) using different electrodes connected to the same potentiostat. Key performance metrics to compare include the signal-to-noise ratio, the sharpness of the peak (indicating efficiency), the reproducibility across multiple electrodes of the same type, and the ease of surface regeneration or cleaning for reusable electrodes.
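Two of the metrics named in this protocol, signal-to-noise ratio and electrode-to-electrode reproducibility, are straightforward to compute once the scans are recorded. The sketch below assumes three electrodes per type and a separately estimated baseline noise; the electrode labels and all values are hypothetical, and `summarize` is a helper name introduced for this example.

```python
from statistics import mean, stdev

# Hypothetical peak currents (microamps) from three electrodes of each type,
# plus a baseline noise estimate. Values are illustrative only.
electrodes = {
    "type A": {"peaks": [3.10, 3.05, 3.15], "noise": 0.02},
    "type B": {"peaks": [1.20, 1.50, 0.90], "noise": 0.05},
}

def summarize(peaks, noise):
    """Return (signal-to-noise ratio, percent RSD) for one electrode type."""
    avg = mean(peaks)
    snr = avg / noise
    rsd = 100 * stdev(peaks) / avg  # sample standard deviation
    return snr, rsd

summary = {name: summarize(d["peaks"], d["noise"]) for name, d in electrodes.items()}

for name, (snr, rsd) in summary.items():
    print(f"{name}: S/N = {snr:.0f}, RSD = {rsd:.1f}%")
```

A material with a high S/N but a large RSD across nominally identical electrodes may still be a poor choice for a method that has to transfer between laboratories.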

Temperature

Temperature affects the kinetics of electrochemical reactions, diffusion coefficients, and, in cell-based assays, the physiological status of biological components.

Table 3: Effects of Temperature in Electrochemical Analysis

| Analysis Context | Key Consideration | Impact on Performance & Robustness |

|---|---|---|

| Cell-Based Studies | Maintenance of physiologically relevant conditions (e.g., 37°C). | Cells experience stress when removed from incubator conditions (37°C), leading to altered behavior and inaccurate drug efficacy data [21]. |

| Solution-Based Drug Detection | Control of reaction kinetics and diffusion. | Higher temperatures generally increase diffusion rates and electron transfer kinetics, potentially enhancing signal strength. Uncontrolled fluctuations harm reproducibility. |

| Cell-Based Studies (Mitigation) | Use of an incubator-integrated platform. | Maintains a constant 37°C and 5% CO2 environment during electrochemical testing of cells, preserving viability and ensuring data accuracy [21]. |

Experimental Protocol for Temperature Control: For routine analysis of drug compounds in solution, a temperature-controlled cell holder should be used to maintain a constant temperature (e.g., 25°C) throughout the analysis to ensure robustness. For cell-based assays, a more sophisticated setup is required. The incubator-integrated platform described in the literature consists of a microfluidic flow-cell housed within a custom incubator module that maintains a stable environment of 37°C and 5% CO2 for both the cells and the measurement solutions, bridging the gap between culture and testing environments [21].

Incubation Time

In cell-based electrochemical analysis, incubation time refers to the duration allowed for cells to adhere, proliferate, or respond to a stimulus on or near the electrode surface before measurement.

Performance Data: The integrity of the electrode surface can be compromised with extended incubation times in a humid CO2 incubator, leading to surface degradation and inconsistent results [21]. Furthermore, the adhesion and proliferation of cells on the electrode is a time-dependent process. For example, a 24-hour incubation period was used to ensure strong adhesion and organization of MCF-7 breast cancer cells on SPEs, which was critical for subsequent drug efficacy tests [21].

Experimental Protocol for Incubation Time Optimization: A sample preparation apparatus is used to incubate cells on the SPE surface inside a commercial incubator. This apparatus is designed to expose only the three-electrode configuration to the environment, preventing the rest of the electrical contacts from being affected by humidity. The incubation time is varied (e.g., 4, 12, 24, 48 hours) and the quality of cell adhesion and the subsequent electrochemical signal (e.g., from a redox mediator or impedance measurement) are assessed to determine the optimal duration for a stable and responsive cell layer [21].
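One simple way to turn the time series from this protocol into a decision is to pick the shortest incubation time after which the signal stops changing appreciably. The sketch below assumes a 5% relative-change tolerance and uses hypothetical normalized signals; neither the threshold nor the values come from [21], and `shortest_stable_time` is a name introduced for illustration.

```python
# Hypothetical normalized signals (e.g., from an impedance readout) at each
# incubation time in hours. Values are illustrative only.
signal_by_hours = {4: 0.35, 12: 0.70, 24: 0.95, 48: 0.97}

def shortest_stable_time(signals, tolerance=0.05):
    """Return the earliest time whose signal differs from the next time
    point by no more than `tolerance` (relative change), or None."""
    times = sorted(signals)
    for earlier, later in zip(times, times[1:]):
        change = abs(signals[later] - signals[earlier]) / signals[earlier]
        if change <= tolerance:
            return earlier
    return None

chosen = shortest_stable_time(signal_by_hours)
print(chosen)
```

Preferring the shortest stable time also limits exposure of the electrode surface to the humid incubator environment, which the performance data above identify as a source of degradation.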

Visualizing Experimental Workflows

Robustness Testing Pathway

The following diagram illustrates the logical workflow for evaluating the robustness of an electrochemical method through systematic parameter testing.

Integrated Incubation & Measurement Platform

This diagram outlines the architecture of a system designed to maintain critical parameters like temperature during cell-based electrochemical assays, a key consideration for robustness.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 4: Key Reagents and Materials for Electrochemical Pharmaceutical Analysis

| Item | Function / Role in Robustness |

|---|---|

| Boron-Doped Diamond (BDD) Electrode | Provides a stable, wide-potential-window surface for direct oxidation of challenging pharmaceuticals like Gemcitabine, reducing fouling and enhancing reproducibility [18]. |

| Screen-Printed Electrodes (SPEs) | Disposable, all-in-one electrode cells that enable portability, miniaturization, and are ideal for single-use cell-based assays, preventing cross-contamination [21]. |

| Phosphate Buffered Saline (PBS) | A common supporting electrolyte that mimics physiological conditions (e.g., pH 7.4), crucial for generating biologically relevant data in drug detection [18]. |

| Britton-Robinson (BRB) Buffer | A universal buffering system used for foundational studies of pH influence on electrochemical behavior across a wide pH range (e.g., 2-12) [18]. |

| Sample Preparation Apparatus | A custom setup designed to hold SPEs during cell incubation, preventing medium evaporation and surface corrugation, thereby ensuring consistent cell adhesion and measurement baseline [21]. |

| Nanomaterial Composites (e.g., CNTs) | Used to modify electrode surfaces to dramatically enhance sensitivity and selectivity for specific analytes like ephedrine, improving the signal-to-noise ratio [19] [16]. |

| Molecularly Imprinted Polymers (MIPs) | Synthetic receptors incorporated into electrodes to provide high selectivity for target molecules, crucial for analyzing drugs in complex matrices [19]. |

In the rigorous field of pharmaceutical development, a robust regulatory framework ensures that medicines are safe, effective, and of high quality. This framework is built upon the complementary roles of three key organizations: the International Council for Harmonisation (ICH), the U.S. Food and Drug Administration (FDA), and the U.S. Pharmacopeia (USP). The ICH develops broad, international technical guidelines for drug development and registration. The FDA issues specific legal requirements and guidance for the U.S. market, often adopting ICH principles. The USP provides the enforceable, public quality standards (the documentary monographs and reference materials) against which drug products are tested. For scientists developing electrochemical methods, understanding this ecosystem is paramount. A method is only truly robust if it is validated according to ICH Q2(R1) principles, fulfills FDA submission expectations, and can consistently meet the acceptance criteria of the relevant USP monograph in a quality control laboratory. This guide compares the roles of these three organizations, providing a structured overview for researchers and drug development professionals navigating the complex journey from method development to regulatory approval.

Comparative Analysis of ICH, FDA, and USP

The following table summarizes the core objectives, outputs, and regulatory weight of ICH, FDA, and USP to clarify their distinct yet interconnected roles.

Table 1: Core Characteristics of ICH, FDA, and USP

| Aspect | International Council for Harmonisation (ICH) | U.S. Food and Drug Administration (FDA) | U.S. Pharmacopeia (USP) |

|---|---|---|---|

| Primary Role | Achieve harmonization of technical requirements for pharmaceuticals for human use. | Protect public health by ensuring the safety and efficacy of drugs and other products. | Create publicly available quality standards for medicines, dietary supplements, and food ingredients. |

| Nature of Documents | Guidelines (e.g., Q-Series for Quality). These are not legally binding but are widely adopted by regulators. | Guidances (current thinking) and Regulations (legal requirements, e.g., 21 CFR). | Compendial Standards (monographs, general chapters). These are legally recognized as enforceable standards in the U.S. under the Food, Drug, and Cosmetic Act. |

| Geographic Scope | Global (members from EU, Japan, USA, Canada, Switzerland, and others). | National (United States), but its influence is global. | National (legally recognized in the U.S.), but used globally as a quality benchmark. |

| Key Outputs | ICH Q1 (Stability Testing) [22]; ICH Q2(R1) (Validation of Analytical Procedures); ICH Q3 (Impurities) [22]; ICH M13A (Bioequivalence) [22] | Newly Added Guidance Documents (e.g., on Biosimilars, Quality) [22] [23]; Product-Specific Guidances (PSGs) for Generics [24]; Regulations (CFR Title 21) | USP-NF (United States Pharmacopeia – National Formulary); Reference Standards (physical materials) [25]; General Chapters (e.g., <711> Dissolution, <85> Endotoxins) [26] |

| Enforcement | Adopted into the regulatory framework of member regions. | Enforced by law. Failure to comply with regulations or relevant guidance can lead to application rejection or regulatory action. | Enforced by the FDA. A drug that fails to comply with USP standards where applicable may be deemed adulterated. |

Experimental Protocols for Regulatory Validation

To transition an electrochemical method from research to a regulatory-compliant quality control tool, its validation and application must align with ICH, FDA, and USP expectations. The following protocols outline key experiments.

Protocol 1: Ruggedness Testing of an Electrochemical Method for Drug Substance Assay per ICH Q2(R1)

1. Objective: To demonstrate the reliability of an electrochemical assay (e.g., for Apixaban) when subjected to deliberate variations in analytical conditions, as required by ICH Q2(R1) guidelines on method validation.

2. Methodology:

- Analytical Procedure: Utilize a validated differential pulse voltammetry (DPV) method on a pharmaceutical sample.

- Variations Tested: Systematically alter key method parameters one at a time (OFAT) from their nominal values. Variations include:

  - pH of the supporting electrolyte: ± 0.5 pH units.

  - Scan rate: ± 10%.

  - Instrument (potentiostat): Use two different models from different manufacturers.

  - Analyst: Two different qualified analysts perform the analysis on different days.

- Evaluation: For each variation, prepare and analyze a sample set in triplicate from a single homogeneous batch of the drug substance. The primary outcomes are the assay percentage and the peak shape (e.g., half-peak width).

3. Data Analysis: The method is considered rugged if the results (assay value) obtained under all varied conditions remain within the pre-defined acceptance criteria (e.g., ±2.0% of the nominal value and RSD <2.0%) and no significant degradation in peak shape is observed.
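The acceptance check in step 3 can be expressed as a short script. The sketch below applies the example criteria from the protocol (mean within ±2.0% of nominal, RSD below 2.0%) to triplicate results for each varied condition. The assay values are hypothetical, and `evaluate` is a helper name introduced here; peak-shape evaluation is not modeled.

```python
from statistics import mean, stdev

NOMINAL = 100.0      # assay (% of label claim) under nominal conditions
MAX_BIAS_PCT = 2.0   # acceptance: mean within +/-2.0% of nominal
MAX_RSD_PCT = 2.0    # acceptance: RSD below 2.0%

# Hypothetical triplicate assay results per varied condition (illustrative only).
results = {
    "pH +0.5": [99.6, 100.2, 99.9],
    "pH -0.5": [98.9, 99.4, 99.1],
    "scan rate +10%": [100.8, 101.1, 100.5],
    "analyst 2, day 2": [96.9, 97.4, 97.1],
}

def evaluate(values, nominal=NOMINAL):
    """Return (bias in %, RSD in %, pass/fail) for one condition."""
    avg = mean(values)
    bias = 100 * (avg - nominal) / nominal
    rsd = 100 * stdev(values) / avg
    passed = abs(bias) <= MAX_BIAS_PCT and rsd < MAX_RSD_PCT
    return bias, rsd, passed

report = {name: evaluate(vals) for name, vals in results.items()}
method_is_rugged = all(passed for _, _, passed in report.values())

for name, (bias, rsd, passed) in report.items():
    print(f"{name}: bias {bias:+.2f}%, RSD {rsd:.2f}%, {'pass' if passed else 'FAIL'}")
print("rugged:", method_is_rugged)
```

With these illustrative numbers the second analyst's results are precise but biased low by almost 3%, so the method as a whole fails even though every individual RSD is acceptable. This is the kind of systematic shift that a precision check alone would miss.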

Protocol 2: Verification of a Compendial Procedure for Dissolution Testing

1. Objective: To verify that a developed in-house electrochemical method is suitable for testing a specific drug product against the acceptance criteria of its USP monograph, as per FDA Q&A on dissolution [26].

2. Methodology:

- Reference to Compendia: Identify the dissolution medium, apparatus, and rotation speed specified in the relevant USP monograph (e.g., USP General Chapter <711>) [26].

- Sample Preparation: Use a standard basket (Apparatus 1) or paddle (Apparatus 2) setup. For an extended-release product, a USP Apparatus 3 (reciprocating cylinder) may be specified.

- Electrochemical Analysis: At specified time points, withdraw dissolution medium and analyze using the DPV method. This demonstrates the method's suitability in a complex matrix.

- Justification of Suitability: The report must include data on the robustness of the analytical method (as per Protocol 1), specificity (no interference from tablet excipients or degraded products), and demonstration of the discriminating ability of the overall dissolution method.

3. Data Analysis: The verification is successful if the dissolution profile obtained with the electrochemical method meets the monograph's acceptance criteria (e.g., Q=80% in 30 minutes) and all system suitability parameters are met, proving the method is "fit-for-purpose" in a QC environment.
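As a rough illustration of the data analysis step, the sketch below checks six hypothetical dissolution results against the example value Q = 80%. It assumes the first-stage (S1) criterion commonly applied to immediate-release dosage forms under USP <711>, in which each of six units must be not less than Q + 5%. Later stages (S2, S3) and any product-specific criteria are not modeled, and the monograph itself remains the authority. `passes_stage_1` is a name introduced for this example.

```python
Q = 80.0  # example Q value (% dissolved at 30 minutes) from the protocol above

# Hypothetical % dissolved at 30 minutes for six units, quantified by DPV.
units = [88.2, 86.5, 90.1, 87.3, 85.9, 89.0]

def passes_stage_1(percent_dissolved, q=Q):
    """Assumed S1 check: exactly six units, each not less than Q + 5%."""
    return len(percent_dissolved) == 6 and all(
        value >= q + 5.0 for value in percent_dissolved
    )

s1_ok = passes_stage_1(units)
print("S1 criterion met:", s1_ok)
```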

Signaling Pathways and Logical Relationships

The journey of an analytical method from conception to regulatory acceptance involves a structured, interdependent process. The diagram below maps this pathway, highlighting the critical decision points and the distinct roles played by ICH, FDA, and USP.

Diagram 1: Method Regulatory Pathway

The Scientist's Toolkit: Key Reagents and Materials

The following table details essential research reagent solutions and materials critical for conducting ruggedness and robustness testing of electrochemical pharmaceutical methods in a regulatory context.

Table 2: Essential Research Reagent Solutions for Robustness Testing

| Item | Function in Experiment | Regulatory Consideration |

|---|---|---|

| USP Reference Standard | Highly characterized specimen of the drug substance used to qualify the analytical procedure and prepare calibration standards [25]. | Essential for demonstrating method accuracy and generating defensible data. Use of a non-USP RS requires full justification and characterization data. |

| Qualified Impurities | Physicochemical specimens of known and potential degradation products or process-related impurities. | Critical for establishing the specificity and stability-indicating properties of the method, as required by ICH Q3 guidelines [22]. |

| Pharmaceutical Grade Excipients | High-quality components of the drug product formulation (e.g., lactose, magnesium stearate). | Used in placebo mixtures to prove method specificity—that the analyte signal is not interfered with by the sample matrix, a key ICH Q2(R1) validation parameter. |

| System Suitability Standards | Control preparation(s) used to verify that the chromatographic or electrochemical system is performing adequately at the time of the test. | A core requirement of USP general chapters. System suitability tests must be met before sample data can be considered valid [26]. |

| Buffer Components & Electrolytes | High-purity salts and chemicals for preparing the supporting electrolyte and mobile phases. | The pH and ionic strength of the electrolyte are critical method parameters. Their variation is a core part of robustness/ruggedness testing per ICH Q2(R1). |

A Practical Framework: Implementing Robustness and Ruggedness Tests with DoE and AQbD

Design of Experiments (DoE) represents a systematic, rigorous method for planning and conducting experiments to efficiently investigate the relationship between multiple input factors and output responses [27]. In the context of pharmaceutical development, particularly for electrochemical analytical methods, DoE provides a structured framework to replace the traditional "One Factor at a Time" (OFAT) approach, which is inefficient and fails to identify interactions between factors [28]. A well-executed DoE enables researchers to establish cause-and-effect relationships, optimize processes, and build predictive models with minimal experimental runs, thereby accelerating method development while ensuring reliability and regulatory compliance [29] [27].

For electrochemical pharmaceutical methods, DoE is particularly valuable during method validation, where understanding the combined impact of multiple analytical parameters on method performance is crucial for establishing robustness and ruggedness [2]. This approach allows scientists to quantitatively determine how variations in method parameters (e.g., pH, mobile phase composition, temperature) affect critical quality attributes, providing a scientific basis for setting system suitability specifications and control limits.

Fundamental Principles of Experimental Design

The foundation of modern experimental design rests on principles established by Sir Ronald Fisher, which remain essential for conducting valid and reliable experiments [27]:

- Comparison: Experiments should be structured to enable meaningful comparisons between treatments, typically against a control or baseline condition that represents the current standard or untreated state [27].

- Randomization: The random assignment of experimental units to different treatment groups helps mitigate the effects of confounding variables and ensures that uncontrolled factors are distributed randomly across treatments [27].

- Replication: Repeating experimental measurements or conditions allows researchers to estimate natural variation and measurement uncertainty, providing more reliable estimates of treatment effects [27].

- Blocking: Organizing experimental units into homogeneous groups (blocks) reduces known sources of variation, thereby increasing the precision of effect estimation [27].

- Orthogonality: Designing contrasts and comparisons to be statistically independent ensures that factor effects can be estimated separately without correlation [27].

These principles work synergistically to minimize bias, control experimental error, and ensure that results are both statistically sound and scientifically defensible—a critical consideration in regulated pharmaceutical environments.

Key Types of Experimental Designs and Their Applications

Experimental designs can be categorized into several families based on their primary objectives and the stage of investigation [29] [30]. The selection of an appropriate design depends on the experimental goal, the number of factors to be investigated, and available resources [30].

Comparative Designs

Purpose: To determine whether a specific factor produces a statistically significant effect on the response variable [30].

Applications: Initial method development stages, verifying critical factors, comparing alternative methods or instruments.

Design Approaches: Completely randomized designs for single factors, randomized block designs when dealing with known nuisance variables [31].

Screening Designs

Purpose: To identify the few significant factors from many potential factors [30].

Applications: Early-stage method development when numerous factors may influence the analytical method; identifying critical process parameters.

Common Designs:

- Full Factorial Designs: Investigate all possible combinations of factors and levels, enabling estimation of all main effects and interactions [29].

- Fractional Factorial Designs: Examine a carefully selected subset of full factorial combinations, assuming higher-order interactions are negligible [29].

- Plackett-Burman Designs: Highly efficient for screening large numbers of factors with minimal runs when only main effects are of interest [30].

Table 1: Comparison of Screening Design Types

| Design Type | Factors | Runs | Effects Estimated | Key Considerations |

|---|---|---|---|---|

| Full Factorial | 2-5 | 2^k (k=factors) | All main effects and interactions | Requires more resources; comprehensive |

| Fractional Factorial | 5+ | 2^(k-p) | Main effects and lower-order interactions | Aliasing of effects; resolution indicates clarity |

| Plackett-Burman | 5+ | Multiple of 4 | Main effects only | Assumes no interactions; screening only |
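The run counts in the table follow directly from how two-level designs are constructed. The sketch below builds a full factorial and a half-fraction design in coded units (-1 for the low level, +1 for the high level). It is a minimal illustration using only the standard library; `full_factorial` and `half_fraction` are names introduced here, and dedicated DoE software would normally be used for larger or more specialized designs such as Plackett-Burman.

```python
from itertools import product

def full_factorial(k):
    """All 2**k runs of a two-level design, coded as -1 / +1."""
    return [list(run) for run in product((-1, 1), repeat=k)]

def half_fraction(k):
    """2**(k-1) half fraction: the last factor is set to the product of
    the other k-1 factors (defining relation I = ABC...K)."""
    runs = []
    for base in product((-1, 1), repeat=k - 1):
        last = 1
        for level in base:
            last *= level
        runs.append(list(base) + [last])
    return runs

full = full_factorial(3)   # 3 factors in 8 runs
half = half_fraction(4)    # 4 factors in 8 runs instead of 16

print(len(full), len(half))
for run in half:
    print(run)
```

In the four-factor half fraction, main effects are not aliased with two-factor interactions, but two-factor interactions are aliased with each other (resolution IV). That is the trade-off the "Aliasing of effects" entry in the table refers to.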

Response Surface Methodology (RSM) Designs

Purpose: To model and optimize processes by estimating interaction and quadratic effects [29] [30].

Applications: Method optimization, finding optimal process settings, making processes robust against uncontrollable influences [30].

Common Designs:

- Central Composite Designs (CCD): Combine factorial points with center and axial points to estimate curvature [29].

- Box-Behnken Designs: Efficient three-level designs that avoid extreme factor combinations while still estimating quadratic effects [30].
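To make the structure of a central composite design concrete, the sketch below generates its three groups of points in coded units: the two-level factorial corners, the axial (star) points, and replicated center points. It assumes a full-factorial core and the rotatable choice of axial distance, alpha = (2^k)^(1/4); other choices such as face-centered designs (alpha = 1) are equally valid. `central_composite` is a name introduced for illustration.

```python
from itertools import product

def central_composite(k, n_center=3):
    """Return CCD points in coded units for k factors."""
    alpha = (2 ** k) ** 0.25  # rotatable axial distance for a full-factorial core
    factorial = [list(p) for p in product((-1.0, 1.0), repeat=k)]
    axial = []
    for i in range(k):
        for distance in (-alpha, alpha):
            point = [0.0] * k
            point[i] = distance
            axial.append(point)
    center = [[0.0] * k for _ in range(n_center)]
    return factorial + axial + center

design = central_composite(2)  # e.g., pH and temperature
print(len(design))
for point in design:
    print([round(x, 3) for x in point])
```

For two factors this gives 4 corner points, 4 axial points at about ±1.414, and 3 center points. The axial points lie outside the factorial range, so the corresponding real settings must still be experimentally feasible.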

Space-Filling Designs

Purpose: To broadly explore experimental spaces with minimal assumptions about the underlying model structure [29].

Applications: Preliminary investigation of new analytical systems, computer experiments, systems with limited prior knowledge.

Key Feature: These designs sample factors at many different levels across the entire experimental region without assuming a specific model form [29].

DoE Selection Framework for Pharmaceutical Analysis

The choice of experimental design should align with both the experimental objectives and the number of factors under investigation [30]. The following table provides a structured framework for design selection in the context of electrochemical pharmaceutical methods:

Table 2: Experimental Design Selection Guide

| Number of Factors | Comparative Objective | Screening Objective | Response Surface Objective |

|---|---|---|---|

| 1 | 1-factor completely randomized design | - | - |

| 2-4 | Randomized block design | Full or fractional factorial | Central composite or Box-Behnken |

| 5 or more | Randomized block design | Fractional factorial or Plackett-Burman | Screen first to reduce number of factors |

This framework emphasizes a sequential approach to experimentation, where screening designs first identify critical factors before more resource-intensive optimization designs are employed [29] [30]. This strategy ensures efficient resource utilization while building comprehensive process understanding—a key element of Quality by Design (QbD) initiatives in pharmaceutical development [27].
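The selection guide can also be encoded as a small lookup, which is convenient when design choice is part of a scripted method-development workflow. The function below simply mirrors the table above; the function name and objective labels are illustrative choices, not an established API.

```python
def suggest_design(n_factors, objective):
    """Look up a design family following the selection guide above.

    objective is one of 'comparative', 'screening', 'response_surface'.
    Returns None where the guide lists no design.
    """
    if n_factors < 1:
        raise ValueError("n_factors must be at least 1")
    if objective not in ("comparative", "screening", "response_surface"):
        raise ValueError(f"unknown objective: {objective}")
    if n_factors == 1:
        if objective == "comparative":
            return "1-factor completely randomized design"
        return None
    if objective == "comparative":
        return "Randomized block design"
    if n_factors <= 4:
        if objective == "screening":
            return "Full or fractional factorial"
        return "Central composite or Box-Behnken"
    if objective == "screening":
        return "Fractional factorial or Plackett-Burman"
    return "Screen first to reduce number of factors"

print(suggest_design(6, "screening"))
print(suggest_design(3, "response_surface"))
```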

Application to Ruggedness and Robustness Testing

In analytical chemistry, particularly for electrochemical pharmaceutical methods, robustness and ruggedness testing are critical validation requirements that ensure method reliability under normal operational variations [2].

Robustness Testing

Definition: The deliberate, systematic examination of an analytical method's performance when subjected to small, premeditated variations in its parameters [2].

Experimental Approach:

- Utilize fractional factorial or Plackett-Burman designs to efficiently evaluate multiple parameters simultaneously [2].

- Test method parameters at slightly different levels (e.g., pH ±0.1-0.2 units, flow rate ±5-10%, temperature ±2-5°C) [2].

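- Measure impact on critical method attributes (e.g., peak current, peak potential, and peak width for voltammetric methods; retention time, peak area, and resolution for chromatographic ones).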

Objective: Identify which method parameters are most sensitive to variation and establish permissible operating ranges [2].

Ruggedness Testing

Definition: Assessment of method reproducibility under varying real-world conditions, including different analysts, instruments, laboratories, or days [2].

Experimental Approach:

- Employ randomized block designs to account for known sources of variation (analyst, instrument, day) [31] [27].

- Include center points to assess stability over time [29].

- Conduct inter-laboratory studies when method transfer is anticipated.

Objective: Demonstrate that the method produces consistent results when applied under different normal use conditions [2].

Table 3: Comparison of Robustness vs. Ruggedness Testing

| Feature | Robustness Testing | Ruggedness Testing |

|---|---|---|

| Purpose | Evaluate method performance under small, deliberate parameter variations | Evaluate method reproducibility under real-world environmental variations |

| Scope | Intra-laboratory, during method development | Inter-laboratory, often for method transfer |

| Variations | Small, controlled changes (e.g., pH, flow rate) | Broader environmental factors (e.g., analyst, instrument, day) |

| Timing | Early in method validation | Later in validation, often before method transfer |

| Key Question | How well does the method withstand minor tweaks? | How well does the method perform in different settings? |

Experimental Protocol: Robustness Testing for an Electrochemical Method

The following protocol outlines a systematic approach to robustness testing using fractional factorial design:

Pre-Experimental Planning

- Define Critical Method Parameters: Identify 5-7 potentially influential factors (e.g., buffer pH, electrolyte concentration, scan rate, temperature, electrode conditioning time).

- Select Response Metrics: Determine critical quality attributes (e.g., peak current, peak potential, detection limit, quantification precision).

- Establish Factor Ranges: Set appropriate high/low levels for each factor based on preliminary knowledge (±5-10% of nominal values).

- Choose Experimental Design: Select a resolution IV or V fractional factorial design to estimate main effects clearly while minimizing run numbers.

Experimental Execution

- Randomize Run Order: Execute experimental runs in random order to minimize time-related bias [27].

- Include Center Points: Incorporate 3-5 center point runs throughout the experiment to check for curvature and estimate pure error [29].

- Control Constant Factors: Maintain all non-studied parameters at constant levels throughout the experiment.

- Replicate Critical Conditions: Repeat center points and selected factor combinations to estimate experimental error.
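The randomization and center-point steps above can be combined into a reproducible run sheet. The sketch below shuffles the design runs with a seeded random number generator and then places center points at evenly spaced positions, including the start and end of the session, so that drift can be tracked. The placement rule and the name `build_run_sheet` are assumptions made for this example; a full three-factor factorial stands in for whichever fractional design is actually chosen.

```python
import random
from itertools import product

def build_run_sheet(runs, n_center=3, seed=2024):
    """Shuffle design runs, then insert center points at evenly spaced positions."""
    if n_center < 2:
        raise ValueError("use at least two center points")
    rng = random.Random(seed)  # fixed seed so the sheet can be regenerated
    order = [tuple(run) for run in runs]
    rng.shuffle(order)
    center = (0,) * len(order[0])
    # Positions are computed on the shuffled list and filled from the end
    # so that earlier insertions do not shift later ones.
    positions = [round(i * len(order) / (n_center - 1)) for i in range(n_center)]
    for pos in reversed(positions):
        order.insert(pos, center)
    return order

runs = list(product((-1, 1), repeat=3))  # coded levels for three factors
sheet = build_run_sheet(runs)

for number, run in enumerate(sheet, start=1):
    print(number, run)
```

Recording the seed alongside the run sheet lets a reviewer confirm that the execution order was fixed before the data were collected.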

Data Analysis and Interpretation

- Statistical Analysis: Perform analysis of variance (ANOVA) to identify statistically significant effects (p < 0.05 typically).

- Effect Estimation: Calculate and visualize main effects and two-factor interactions.

- Model Building: Develop predictive models for critical responses if appropriate.

- Establish Control Ranges: Define acceptable operating ranges for significant parameters based on response requirements.
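The effect-estimation step reduces to simple contrasts for a two-level design: each effect is the mean response at the high level minus the mean response at the low level of the relevant column. The sketch below shows this for a hypothetical two-factor example with made-up peak currents. It computes effects only; judging their significance by ANOVA additionally requires an error estimate from replicates or center points, which is not modeled here.

```python
from statistics import mean

def effect(column, response):
    """Mean response at the high level minus mean response at the low level."""
    high = [y for level, y in zip(column, response) if level > 0]
    low = [y for level, y in zip(column, response) if level < 0]
    return mean(high) - mean(low)

# Coded 2x2 design: (pH, scan rate). Responses are hypothetical peak currents.
design = [(-1, -1), (-1, 1), (1, -1), (1, 1)]
peak_current = [7.5, 8.5, 11.5, 12.5]

ph_col = [run[0] for run in design]
scan_col = [run[1] for run in design]
interaction_col = [a * b for a, b in design]  # product column for pH x scan rate

effects = {
    "pH": effect(ph_col, peak_current),
    "scan rate": effect(scan_col, peak_current),
    "pH x scan rate": effect(interaction_col, peak_current),
}

for name, value in effects.items():
    print(f"{name}: {value:+.2f}")
```

In this illustrative data set pH has the largest effect, so it would be the first candidate for a tightened operating range or a system suitability control.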

Visualization of Experimental Design Workflows

Essential Research Reagent Solutions for Electrochemical Pharmaceutical Analysis

Table 4: Key Research Reagents and Materials for Electrochemical Methods

| Reagent/Material | Function | Application Notes |

|---|---|---|

| Buffer Solutions | Maintain consistent pH for reproducible electrochemical measurements | Critical for robustness; pH variations significantly affect results |

| Electrolyte Salts | Provide ionic conductivity in solution | Concentration and composition affect electron transfer kinetics |

| Standard Reference Materials | Calibrate instruments and verify method accuracy | Certified reference materials ensure measurement traceability |

| Electrode Cleaning Solutions | Maintain consistent electrode surface properties | Essential for reproducible electrode performance |

| Anti-fouling Agents | Prevent adsorption of interfering substances on electrode surfaces | Improve method robustness for complex samples |

| Redox Mediators | Facilitate electron transfer in complex systems | Enhance sensitivity and selectivity for specific analytes |

Systematic experimental design provides pharmaceutical scientists with a powerful framework for developing, optimizing, and validating robust electrochemical analytical methods. By replacing inefficient OFAT approaches with structured multivariate designs, researchers can comprehensively understand method behavior while conserving resources. The sequential application of screening, optimization, and validation designs aligns perfectly with Quality by Design principles, enabling science-based establishment of method operable design regions. For electrochemical methods specifically, this approach systematically addresses both robustness (resistance to parameter variations) and ruggedness (reproducibility across different conditions), ultimately delivering reliable, transferable analytical procedures that ensure drug product quality and patient safety.

In the pharmaceutical industry, the paradigm for ensuring analytical quality has fundamentally shifted from a reactive to a proactive approach. Analytical Quality by Design (AQbD) represents a systematic framework for developing analytical methods that are fit-for-purpose, robust, and well-understood throughout their entire lifecycle [32] [33]. Unlike traditional quality-by-testing (QbT) methodologies that rely on fixed conditions with limited understanding of variability, AQbD emphasizes proactive risk management and scientific understanding to build quality directly into analytical methods [32] [34]. This approach, endorsed by regulatory bodies including the FDA and articulated in ICH guidelines Q8-Q14, directly links method robustness to effective lifecycle management, ensuring methods consistently produce reliable results despite minor, inevitable variations in execution [35] [36].

The core objective of AQbD is to establish a Method Operable Design Region (MODR)—a multidimensional combination of analytical factors and parameter ranges within which method performance consistently meets predefined criteria [37] [35]. Operating within the MODR provides regulatory flexibility, as changes to method parameters within this validated space do not typically require revalidation or regulatory notification [32] [35]. This article compares the AQbD paradigm against traditional approaches, providing experimental data and detailed protocols that demonstrate how a science- and risk-based framework leads to more rugged and easily managed analytical methods, with a specific focus on chromatographic applications in pharmaceutical development.

Core Principles: Traditional vs. AQbD Approach

The fundamental differences between traditional and AQbD approaches lie in their philosophy, development process, and long-term management strategy.

Table 1: Comparison of Traditional Analytical Method Development and the AQbD Approach

| Aspect | Traditional Approach (QbT) | Enhanced AQbD Approach |
|---|---|---|
| Philosophy | "Quality by Testing"; reactive; fixed point | "Quality by Design"; proactive; flexible region [32] [35] |
| Development Method | Often One-Factor-at-a-Time (OFAT); trial-and-error [32] | Systematic, based on Risk Assessment & Design of Experiments (DoE) [37] [38] |
| Primary Focus | Meeting validation criteria at a fixed set of conditions [36] | Understanding the entire method response and controlling Critical Method Parameters (CMPs) [33] |
| Control Strategy | Fixed operational conditions; rigid | Method Operable Design Region (MODR); flexible within the proven acceptable range [37] [35] |
| Lifecycle Management | Post-approval changes often require regulatory submission [35] | Changes within MODR are managed under a company's quality system, enabling continuous improvement [32] [36] |
| Robustness | Tested late in development, often univariate | Understood early and built-in via multivariate experiments [9] [35] |

The traditional OFAT approach, while straightforward, fails to capture parameter interactions and often results in a method that is fragile when transferred to different laboratories or instruments [35]. In contrast, the AQbD workflow is a holistic, iterative process. It begins by defining what the method is intended to measure, the Analytical Target Profile (ATP), and then uses risk assessment and multivariate DoE to understand the relationship between method parameters and performance attributes. The result is a MODR within which robustness has been demonstrated rather than assumed [32] [37] [33].
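To make the point about parameter interactions concrete, the following minimal sketch compares an OFAT check with a full two-level factorial check on the same response. The response model, its coefficients, and the resolution limit of 1.5 are hypothetical values chosen for illustration only; they are not taken from any cited study.

```python
from itertools import product

# Hypothetical response model in coded units (-1 = low, 0 = nominal, +1 = high).
# The x1*x2 term is the interaction that an OFAT study never exercises.
def resolution(x1, x2):
    return 2.0 + 0.3 * x1 + 0.2 * x2 - 0.8 * x1 * x2

MIN_RESOLUTION = 1.5  # assumed acceptance limit

# OFAT: vary one factor at a time while the other stays at nominal (0).
ofat_points = [(-1, 0), (1, 0), (0, -1), (0, 1)]
ofat_failures = [p for p in ofat_points if resolution(*p) < MIN_RESOLUTION]

# Full factorial: every combination of low and high levels.
factorial_points = list(product((-1, 1), repeat=2))
factorial_failures = [p for p in factorial_points if resolution(*p) < MIN_RESOLUTION]

print("OFAT failures:", ofat_failures)
print("Factorial failures:", factorial_failures)
```

Under these assumed coefficients, every OFAT point passes, while the factorial design exposes a failing corner where both factors sit at their low level. This is the kind of fragility that only a multivariate design can reveal.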

The AQbD Workflow: A Systematic Journey

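The core lifecycle of an analytical procedure developed under the AQbD paradigm runs from initial definition to continuous monitoring: definition of the ATP, technique selection and risk assessment, screening and optimization by DoE, definition of the MODR, control strategy and validation, and ongoing lifecycle management.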

Experimental Data & Comparative Case Studies

Case Study 1: AQbD for Cephalosporin Analysis by HPLC

A recent study developed an HPLC method for the identification and quantification of different cephalosporins and their degradation products using AQbD principles [37]. The workflow integrated an in-silico prediction tool to guide initial development, minimizing experimental trials.

- ATP Definition: The ATP was defined as an isocratic HPLC procedure capable of identifying and quantifying multiple cephalosporins (e.g., CFZ, CFM, CFX, CPL) in the range of 90–110% of target concentration (0.4 mg/mL), simultaneously resolving them from their degradation products. Key performance criteria included a minimum of 2500 theoretical plates, peak asymmetry between 0.5–2.0, and a maximum run time of 20 minutes [37].

- Risk Assessment & DoE: A risk assessment identified the Critical Method Parameters (CMPs), including mobile phase pH, column temperature, and gradient time. A virtual DoE was used for initial screening, followed by experimental optimization to define the MODR [37].

- MODR & Control Strategy: The MODR was established using Monte Carlo simulations to determine the multidimensional region where the probability of meeting all ATP criteria was highest. The final method was validated within this region, proving robust and flexible for its intended use [37].
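The Monte Carlo step described above can be sketched as follows. This is an illustration under stated assumptions, not the procedure used in the cited study: the two response models, the noise levels, and the grid values are hypothetical, and only the acceptance limits (at least 2500 plates, asymmetry between 0.5 and 2.0) come from the ATP quoted in this case study.

```python
import random

# Hypothetical response models (NOT from the cited study): each maps
# method parameters to a predicted performance attribute.
def predicted_plates(ph, temp_c):
    return 3200 - 900 * abs(ph - 3.0) - 25 * abs(temp_c - 30.0)

def predicted_asymmetry(ph, temp_c):
    return 1.1 + 0.4 * (ph - 3.0) + 0.01 * (temp_c - 30.0)

def probability_of_success(ph, temp_c, n_sim=2000, seed=0):
    """Fraction of simulated runs that meet all criteria at one set point."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_sim):
        # Perturb the set point to mimic routine execution error (assumed SDs).
        ph_i = rng.gauss(ph, 0.05)
        temp_i = rng.gauss(temp_c, 0.5)
        # Add assumed residual uncertainty to each predicted response.
        plates = predicted_plates(ph_i, temp_i) + rng.gauss(0, 150)
        asym = predicted_asymmetry(ph_i, temp_i) + rng.gauss(0, 0.05)
        if plates >= 2500 and 0.5 <= asym <= 2.0:
            hits += 1
    return hits / n_sim

def map_modr(ph_grid, temp_grid, threshold=0.95):
    """Grid points whose simulated success probability reaches the threshold."""
    return [(ph, t) for ph in ph_grid for t in temp_grid
            if probability_of_success(ph, t) >= threshold]

print(map_modr([2.8, 3.0, 3.2, 4.0], [28.0, 30.0, 32.0]))
```

In practice the response models would be the regression models fitted to the DoE data, and the perturbation sizes would reflect measured variability of the instrument and the mobile phase preparation.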

Case Study 2: AQbD for a Botanical Drug Substance

Another study applied AQbD to the complex analysis of a medicinal plant, Picrorhiza scrophulariiflora Pennell, for the quantification of its active constituent, Picroside II [39].

- ATP & Challenges: The complexity of the plant matrix, with multiple phytochemicals, presented a significant challenge. The ATP focused on the specific, precise, and accurate quantification of Picroside II in bulk and pharmaceutical dosage forms [32] [39].

- DoE & Optimization: A Box-Behnken Design (BBD) was employed to systematically optimize critical parameters. The factors investigated were the concentration of formic acid in the aqueous phase, the percentage of acetonitrile in the mobile phase, and the flow rate. The responses monitored were retention time, theoretical plates, and tailing factor [39].

- Outcome: The optimized method used a Waters XBridge C18 column with a mobile phase of 0.1% formic acid and acetonitrile (77:23 v/v) at a flow rate of 1.0 mL/min. The method was specific, precise (% RSD < 2%), linear (6–14 μg/mL), and robust (% RSD < 1%), successfully passing forced degradation studies [39].
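A three-factor Box-Behnken design like the one used in this study can be generated with a few lines of code. The sketch below builds the coded design (each pair of factors at its low and high levels with the third at the center, plus replicated center points) and converts it to real units. The center values follow the optimized conditions reported above; the half-ranges and the number of center points are assumptions for illustration, not the levels used in [39].

```python
from itertools import combinations, product

def box_behnken_3(n_center=3):
    """Coded three-factor Box-Behnken design: 12 edge runs plus center points."""
    runs = []
    for i, j in combinations(range(3), 2):
        for a, b in product((-1, 1), repeat=2):
            row = [0, 0, 0]
            row[i], row[j] = a, b
            runs.append(tuple(row))
    runs.extend([(0, 0, 0)] * n_center)
    return runs

def decode(coded_run, centers, half_ranges):
    """Convert coded levels (-1, 0, +1) to real factor settings."""
    return tuple(c + x * h for x, c, h in zip(coded_run, centers, half_ranges))

# Factors: formic acid (% v/v), acetonitrile (% v/v), flow rate (mL/min).
centers = (0.10, 23.0, 1.0)
half_ranges = (0.05, 2.0, 0.1)  # assumed variation around each center

for run in box_behnken_3():
    print(run, decode(run, centers, half_ranges))
```

The design never places all three factors at an extreme simultaneously, which is one reason Box-Behnken designs are popular when corner conditions are risky for the column or the sample.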

Table 2: Summary of Experimental Outcomes from AQbD Case Studies

| Study & Analyte | Defined MODR (Key Parameters) | Final Method Performance | Demonstrated Robustness |
|---|---|---|---|
| Cephalosporins & Degradants [37] | Multidimensional combination of mobile phase pH, column temperature, and gradient time. | Met all ATP criteria: Resolution >2.0, plates >2500, asymmetry 0.8-1.5. | Method performed reliably across all parameter variations within the MODR. |
| Picroside II in Plant Extract [39] | Mobile phase composition (ACN: 0.1% FA) ~ (23:77), Flow rate ~1.0 mL/min. | Retention time: 6.0-6.2 min, Precision (% RSD): <2%, Assay: 99.46%. | Robustness tested by deliberate variations; % RSD <1%. |

The Scientist's Toolkit: Essential Reagents & Materials

Successful implementation of AQbD relies on a set of foundational tools and materials. The following table details key research reagent solutions and their functions in developing robust analytical methods.

Table 3: Essential Research Reagent Solutions for AQbD-based Chromatographic Method Development

| Reagent / Material | Function in AQbD Development | Application Notes |
|---|---|---|
| HPLC/UHPLC System with Diode Array Detector | The core instrumental platform for separation, identification, and quantification of analytes. | Enables high-resolution separation and peak purity assessment, critical for method specificity [39]. |
| Chromatography Data Software | Manages data acquisition, processing, and reporting. Essential for analyzing large DoE datasets. | Software with DoE capabilities (e.g., Design Expert) is used for modeling and defining the MODR [39]. |
| C18 Reversed-Phase Columns | The stationary phase for separating non-polar to moderately polar compounds. A common choice for API and impurity profiling. | Different brands and lots of C18 columns are often evaluated during risk assessment to ensure method ruggedness [38] [33]. |
| HPLC-Grade Organic Modifiers (Acetonitrile, Methanol) | Components of the mobile phase to control elution strength and selectivity. | The choice between acetonitrile and methanol is a key variable screened in early AQbD development [38] [33]. |
| Buffer Salts & pH Adjusters (e.g., Formic Acid, Phosphate Salts) | Used to prepare mobile phase buffers, controlling pH and ionic strength to optimize separation and peak shape. | Mobile phase pH is often identified as a Critical Method Parameter (CMP) with a high impact on selectivity and resolution [37] [39]. |
| Forced Degradation Reagents (Acid, Base, Oxidant) | Used in stress studies to generate degradation products, validating the method's stability-indicating power. | Forced degradation with LC-MS is used to identify degradants and ensure method specificity as part of the ATP [37]. |

Detailed Experimental Protocol: Implementing AQbD for an HPLC Method

The following workflow, adapted from the literature, provides a general protocol for developing an RP-HPLC method using AQbD principles [33].

Phase 1: Define the Analytical Target Profile (ATP)

- Define Purpose: Clearly state what the method must measure (e.g., "quantify drug substance X and its related impurities Y and Z in film-coated tablets").

- Set Performance Criteria: Define joint accuracy and precision requirements. Example ATP: "The method must quantify the analyte over 70-130% of nominal concentration with reported measurements within ±3.0% of the true value with ≥95% probability" [33].

- Define other attributes: Set criteria for range, detection limit, and robustness based on the method's intended use.
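The example ATP statement above combines accuracy and precision into a single probability requirement, which can be checked numerically. The sketch below assumes that reported results are normally distributed around the true value with a fixed bias and standard deviation (both expressed as a percentage of the true value). The normality assumption and the function names are illustrative choices, not part of the cited ATP.

```python
from statistics import NormalDist

def prob_within_limits(bias_pct, sd_pct, limit_pct=3.0):
    """Probability that one reported result falls within +/- limit_pct of truth."""
    dist = NormalDist(mu=bias_pct, sigma=sd_pct)
    return dist.cdf(limit_pct) - dist.cdf(-limit_pct)

def meets_atp(bias_pct, sd_pct, limit_pct=3.0, min_prob=0.95):
    """True when the joint accuracy/precision requirement is satisfied."""
    return prob_within_limits(bias_pct, sd_pct, limit_pct) >= min_prob

# A small bias with good precision passes; a larger bias with poorer precision fails.
print(meets_atp(bias_pct=0.5, sd_pct=1.0))
print(meets_atp(bias_pct=1.5, sd_pct=1.5))
```

Expressing the ATP this way shows the trade-off directly: a method with a small bias can tolerate more random variability than one with a large bias, and the reverse also holds.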

Phase 2: Select Technique and Initial Risk Assessment

- Technique Selection: Choose an appropriate technique (e.g., RP-HPLC with UV detection) capable of meeting the ATP.

- Method Deconstruction: Break the method into Analytical Unit Operations (e.g., sample preparation, chromatographic separation, data analysis).

- Risk Identification: Use a tool like an Ishikawa (fishbone) diagram or a Failure Mode Effects Analysis (FMEA) to identify all potential factors (method parameters) that could affect the Critical Method Attributes (CMAs) like resolution, precision, and tailing factor [32] [33].
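In an FMEA, each candidate factor is commonly scored for severity, occurrence, and detectability, and the product of the three scores (the risk priority number, RPN) is used to rank factors for experimental study. The sketch below illustrates that ranking. The factor names, the 1 to 10 scores, and the cut-off of 100 are hypothetical and would be set by the development team.

```python
def rank_risks(factors, threshold=100):
    """Return (name, RPN, needs_study) tuples sorted by descending RPN.

    factors maps a factor name to (severity, occurrence, detectability),
    each scored on an assumed 1-10 scale.
    """
    scored = [(name, s * o * d) for name, (s, o, d) in factors.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return [(name, rpn, rpn >= threshold) for name, rpn in scored]

# Hypothetical scores for an RP-HPLC method.
example = {
    "mobile phase pH": (8, 6, 5),
    "column temperature": (5, 4, 3),
    "flow rate": (4, 3, 2),
    "detection wavelength": (3, 2, 2),
}

for name, rpn, needs_study in rank_risks(example):
    print(f"{name:22s} RPN={rpn:4d} carry into DoE: {needs_study}")
```

Factors above the cut-off would typically be carried forward into the screening design, while the rest are fixed and documented with their justification.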

Phase 3: Screening and Optimization via Design of Experiments (DoE)

- Screening DoE: Use a fractional factorial or Plackett-Burman design to screen a large number of factors (e.g., mobile phase pH, gradient time, column temperature, flow rate) to identify the few that are truly critical [37] [38].

- Optimization DoE: For the critical parameters (typically 2-3), employ a response surface design like Box-Behnken or Central Composite Design to model their interaction effects on the CMAs [39].

- Modeling & MODR Definition: Use statistical software to fit a multiple regression model to the data. The MODR is defined as the combination of parameter ranges where the probability of meeting all ATP criteria is acceptably high (e.g., >90% or >95%) [37] [35].
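The screening step in this phase can be illustrated with a half-fraction of a four-factor, two-level design (eight runs instead of sixteen), followed by estimation of main effects. This is a minimal sketch: the generator D = ABC is a standard choice for this fraction, but the factor assignments and the simulated responses are hypothetical.

```python
from itertools import product

def half_fraction_4():
    """2^(4-1) design: full factorial in A, B, C with D set to A*B*C."""
    return [(a, b, c, a * b * c) for a, b, c in product((-1, 1), repeat=3)]

def main_effects(design, responses):
    """Mean response at the high level minus mean response at the low level."""
    half = len(design) / 2
    effects = []
    for j in range(len(design[0])):
        high = sum(y for run, y in zip(design, responses) if run[j] == 1)
        low = sum(y for run, y in zip(design, responses) if run[j] == -1)
        effects.append((high - low) / half)
    return effects

design = half_fraction_4()
# Simulated responses: only factor A (e.g., pH) and factor C (e.g., temperature)
# influence the result in this made-up example.
responses = [10 + 2 * a - 0.5 * c for a, b, c, d in design]

for name, effect in zip("ABCD", main_effects(design, responses)):
    print(f"Factor {name}: effect = {effect:+.2f}")
```

Factors with effects that are large relative to experimental noise would move on to the response surface design. In this resolution IV fraction, main effects are not confounded with two-factor interactions, although two-factor interactions are confounded with each other.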

Phase 4: Control Strategy and Validation

- Set Control Strategy: Define system suitability tests (SSTs) derived from the DoE models to ensure the method is performing as expected every time it is used.

- Validate the Method: Perform a formal validation (specificity, linearity, accuracy, precision, LOD/LOQ, robustness) according to ICH Q2(R1) at a working point within the MODR [39].
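A system suitability test can be expressed as a small set of pass/fail checks run before each analytical sequence. The sketch below is one possible form: the default limits reuse figures quoted in the case studies above (at least 2500 plates, asymmetry between 0.5 and 2.0, % RSD of 2 or less), but in practice the limits would be derived from the method's own DoE models, and the function names here are hypothetical.

```python
from statistics import mean, stdev

def percent_rsd(values):
    """Relative standard deviation of replicate results, in percent."""
    return 100.0 * stdev(values) / mean(values)

def system_suitability(peak_areas, plates, asymmetry,
                       max_rsd=2.0, min_plates=2500, asym_range=(0.5, 2.0)):
    """Return (overall_pass, per-check results) for one SST injection set."""
    checks = {
        "rsd": percent_rsd(peak_areas) <= max_rsd,
        "plates": plates >= min_plates,
        "asymmetry": asym_range[0] <= asymmetry <= asym_range[1],
    }
    return all(checks.values()), checks

# Five replicate injections of a standard (illustrative peak areas).
areas = [100.0, 101.0, 99.0, 100.5, 99.5]
print(system_suitability(areas, plates=3000, asymmetry=1.2))
print(system_suitability(areas, plates=2000, asymmetry=1.2))
```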

Phase 5: Lifecycle Management

- Ongoing Monitoring: Continuously collect performance data (e.g., SST results) to verify the method remains in a state of control.

- Continuous Improvement: Use the knowledge and MODR to make controlled, science-based adjustments to the method within the design space without requiring regulatory prior approval [32] [36].
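Ongoing monitoring is often implemented with control charts on SST results. The sketch below uses an individuals chart with limits based on the average moving range (center ± 2.66 × mean moving range, the conventional constant for this chart type). The resolution values are made up for illustration, and the choice of chart is an assumption rather than a requirement of the AQbD framework.

```python
from statistics import mean

def individuals_limits(baseline):
    """Lower limit, center line, and upper limit from baseline SST results."""
    center = mean(baseline)
    moving_ranges = [abs(b - a) for a, b in zip(baseline, baseline[1:])]
    spread = 2.66 * mean(moving_ranges)
    return center - spread, center, center + spread

def out_of_control(baseline, new_values):
    """New results that fall outside the baseline control limits."""
    lower, _, upper = individuals_limits(baseline)
    return [v for v in new_values if not lower <= v <= upper]

# Hypothetical resolution values from routine SST injections.
baseline = [2.1, 2.0, 2.2, 2.1, 2.0, 2.1]
print(individuals_limits(baseline))
print(out_of_control(baseline, [2.15, 1.6, 2.3]))
```

A flagged value does not by itself mean the method has failed its acceptance criteria. It signals a shift worth investigating before results drift out of the MODR.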

The AQbD paradigm represents a fundamental and necessary evolution in pharmaceutical analytical science. By moving from a fixed-point, reactive mindset to a systematic, proactive framework based on scientific understanding and risk management, AQbD directly links method robustness to effective lifecycle management. The experimental data and case studies presented demonstrate that methods developed under AQbD are inherently more robust, easier to transfer, and provide regulatory flexibility through the establishment of a MODR. While its implementation requires an upfront investment in knowledge and statistical expertise, the long-term benefits—reduced out-of-specification (OOS) results, fewer post-approval variations, and a deeper understanding of the analytical procedure—make AQbD the superior approach for ensuring the quality, efficacy, and safety of pharmaceutical products throughout their lifecycle.

In pharmaceutical analysis, where results carry significant weight for drug safety and regulatory compliance, the concepts of ruggedness and robustness are central to method validation. Robustness is defined as "a measure of a method's capacity to remain unaffected by small, but deliberate variations in method parameters and provides an indication of its reliability during normal usage" [10] [11]. Ruggedness, while sometimes used interchangeably with robustness, is more frequently described as "the degree of reproducibility of test results obtained by the analysis of the same sample under a variety of normal test conditions," such as different laboratories, analysts, instruments, or reagents [10] [3]. Essentially, robustness tests a method's resilience to internal, controlled parameter changes, while ruggedness often relates to its performance across external, operational variations. Regulatory bodies like the FDA and ICH emphasize these tests to ensure analytical methods produce reliable data under the varied conditions encountered during transfer between laboratories and in routine use [10] [3]. This guide provides a structured, step-by-step approach for scientists to systematically select critical factors and define their acceptable ranges, thereby strengthening method reliability for electrochemical and other analytical techniques.

Foundational Concepts and Definitions

Regulatory and Scientific Definitions

- ICH Definition of Robustness: "The robustness of an analytical procedure is a measure of its capacity to remain unaffected by small, but deliberate variations in method parameters and provides an indication of its reliability during normal usage." This definition treats ruggedness and robustness as synonyms [10] [11].

- USP Definition of Ruggedness: "The ruggedness of an analytical method is the degree of reproducibility of test results obtained by the analysis of the same sample under a variety of normal test conditions, such as different laboratories, different analysts, different instruments, different lots of reagents, different elapsed assay times, different assay temperatures, different days, etc." This aligns more closely with concepts of intermediate precision [10].

- Youden and Steiner Approach: This historical perspective uses the term "ruggedness" for tests that deliberately examine the influence of controlled changes in method parameters to detect factors with a large influence before an interlaboratory study [10].
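Because the USP description of ruggedness aligns with intermediate precision, it can be quantified by separating within-condition and between-condition variability. The sketch below applies a balanced one-way ANOVA to replicate results grouped by analyst (or day, or instrument). The data are invented, and the balanced layout is an assumption of this simple form of the calculation.

```python
from math import sqrt
from statistics import mean

def precision_components(groups):
    """Return (repeatability SD, intermediate precision SD).

    groups is a list of equally sized lists of replicate results,
    one list per analyst, day, or instrument.
    """
    n = len(groups[0])
    if any(len(g) != n for g in groups):
        raise ValueError("balanced design required: equal replicates per group")
    k = len(groups)
    grand = mean(v for g in groups for v in g)
    ms_within = sum((v - mean(g)) ** 2 for g in groups for v in g) / (k * (n - 1))
    ms_between = n * sum((mean(g) - grand) ** 2 for g in groups) / (k - 1)
    # A negative between-group estimate is truncated at zero.
    var_between = max(0.0, (ms_between - ms_within) / n)
    return sqrt(ms_within), sqrt(ms_within + var_between)

# Two analysts, two replicate assays each (% of label claim, illustrative).
repeatability, intermediate = precision_components([[99.0, 101.0], [101.0, 103.0]])
print(f"repeatability SD = {repeatability:.3f}, intermediate SD = {intermediate:.3f}")
```

When the intermediate precision SD is clearly larger than the repeatability SD, the grouping factor (here, the analyst) contributes meaningfully to variability and is a candidate for tighter control or further ruggedness study.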

The Criticality of Factor Selection and Ranges