A Practical Guide to LOD and LOQ in Electrochemical Assays: From Foundational Concepts to Advanced Applications in Biomedical Research


Abstract

This comprehensive article addresses the critical challenge of accurately determining the Limit of Detection (LOD) and Limit of Quantification (LOQ) in electrochemical assays, a fundamental requirement for researchers, scientists, and drug development professionals. It systematically explores the foundational definitions and importance of these analytical figures of merit, compares prevalent calculation methodologies, and provides practical strategies for troubleshooting and optimization in complex matrices. Further, it details validation protocols and comparative analyses of sensor platforms, with a specific focus on applications in pharmaceutical analysis, clinical diagnostics, and cardiotoxicity screening. By synthesizing current guidelines, experimental approaches, and real-world case studies, this guide aims to establish robust, reliable, and standardized practices for characterizing electrochemical sensor sensitivity, ultimately supporting the development of fit-for-purpose analytical methods in biomedical and clinical research.

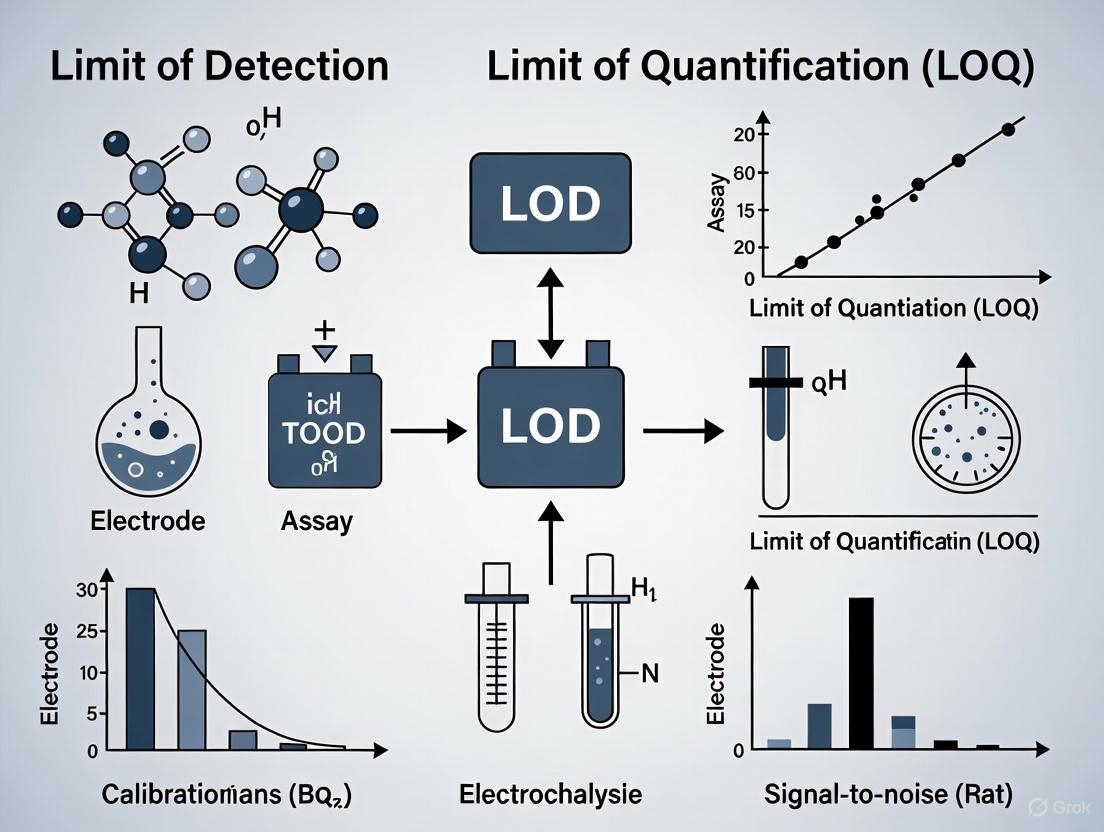

Understanding LOD and LOQ: Core Concepts and Significance in Electroanalytical Chemistry

In analytical chemistry, particularly in the development and validation of electrochemical assays, understanding the lowest levels of analyte that can be reliably detected and measured is fundamental to ensuring data quality and regulatory compliance. The Limit of Detection (LOD) and Limit of Quantification (LOQ) are two critical performance characteristics that define the sensitivity and utility of an analytical method [1] [2]. The LOD represents the lowest concentration of an analyte that can be reliably distinguished from the analytical background noise (the blank), though not necessarily quantified with precise accuracy [3]. In practical terms, it answers the question: "Is there any analyte present at all?" In contrast, the LOQ represents the lowest concentration at which the analyte can not only be detected but also quantified with acceptable precision and accuracy under stated experimental conditions [1] [4]. It answers the more demanding question: "Exactly how much analyte is present?"

The proper determination of these parameters is especially crucial in electrochemical biosensing and pharmaceutical research, where decisions regarding drug purity, impurity profiling, and diagnostic outcomes often depend on measurements made at the extreme lower end of the concentration range [5]. Electrochemical biosensors are particularly valued in this context for their low LOD, high specificity, and potential for miniaturization into point-of-care devices [5] [6]. The clarity in defining and determining LOD and LOQ ensures that methods are "fit for purpose," meaning they possess the necessary sensitivity to detect and quantify analytes at clinically or toxicologically relevant levels [1].

Defining the Fundamental Concepts

Limit of Blank (LoB): The Starting Point

Before delving into LOD and LOQ, it is essential to understand the Limit of Blank (LoB), a related but distinct parameter. The LoB is defined as the highest apparent analyte concentration expected to be found when replicates of a blank sample (containing no analyte) are tested [1]. It essentially describes the background noise of the analytical system. Even in a stable system, the results from analyzing a blank sample fluctuate, and the LoB establishes the upper threshold of these fluctuations. Assuming the blank results are approximately normally distributed, it is calculated as the mean blank signal plus 1.645 times its standard deviation (a one-sided 95% limit): LoB = mean_blank + 1.645(SD_blank) [1]. This means that only 5% of blank measurements would be expected to exceed the LoB, producing a false positive (a Type I error).

Limit of Detection (LOD): The Decision Limit

The Limit of Detection (LOD) is the next critical threshold. It is the lowest analyte concentration that can be reliably distinguished from the LoB with a stated degree of confidence [1] [2]. While a sample at the LOD concentration produces a signal that is statistically different from a blank, the measurement at this level is typically too imprecise for accurate quantification. The LOD acknowledges that samples with very low analyte concentrations will sometimes produce signals below the LoB, leading to a false negative (a Type II error) [1]. The Clinical and Laboratory Standards Institute (CLSI) guideline EP17 defines LOD using both the LoB and data from a low-concentration sample: LOD = LoB + 1.645(SD_low concentration sample) [1]. This formula ensures that 95% of measurements from a sample at the LOD concentration will exceed the LoB, limiting false negatives to about 5%.
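The two formulas above can be sketched in a few lines of Python. This is a minimal illustration, assuming approximately normally distributed results that have already been converted to concentration units; the replicate values and the function names `limit_of_blank` and `limit_of_detection` are hypothetical and not taken from the cited guideline.

```python
from statistics import mean, stdev


def limit_of_blank(blank_values):
    """LoB = mean_blank + 1.645 * SD_blank (one-sided 95 % limit)."""
    return mean(blank_values) + 1.645 * stdev(blank_values)


def limit_of_detection(blank_values, low_sample_values):
    """LOD = LoB + 1.645 * SD of a low-concentration sample."""
    return limit_of_blank(blank_values) + 1.645 * stdev(low_sample_values)


# Hypothetical replicate results in concentration units (e.g. µM).
blanks = [0.10, 0.12, 0.08, 0.11, 0.09, 0.10]
low_sample = [0.30, 0.34, 0.27, 0.31, 0.29, 0.35]

lob = limit_of_blank(blanks)
lod = limit_of_detection(blanks, low_sample)
print(f"LoB = {lob:.3f}, LOD = {lod:.3f}")
```

Because the LOD adds a non-negative term to the LoB, it can never fall below the LoB, which matches the hierarchy described in the text.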

Limit of Quantification (LOQ): The Level for Reliable Measurement

The Limit of Quantification (LOQ) represents a higher standard of performance. It is the lowest concentration at which the analyte can not only be detected but also measured with predefined levels of bias and imprecision (i.e., acceptable accuracy and precision) [1] [4]. The LOQ cannot be lower than the LOD and is often found at a much higher concentration [1]. The requirements for precision at the LOQ are stricter, often defined by an acceptable percent coefficient of variation (%CV), such as 20% or lower, depending on the application [1]. In many contexts, the term "functional sensitivity" is used synonymously with the LOQ, defined specifically as the concentration that yields a CV of 20% [1]. The relationship between these three fundamental limits is progressive: LoB establishes the noise floor, LOD confirms a signal can be distinguished from that noise, and LOQ ensures that signal can be measured with reliability.

Comparative Analysis of LOD and LOQ

The following table provides a consolidated comparison of the key characteristics of LoB, LOD, and LOQ, summarizing their purposes, statistical foundations, and implications for analytical science.

Table 1: Comparative characteristics of Blank, Detection, and Quantification Limits

| Parameter | Definition | Typical Statistical Basis | Primary Question Answered | Implication for a Measurement |
|---|---|---|---|---|
| Limit of Blank (LoB) | Highest apparent concentration expected from a blank sample [1]. | mean_blank + 1.645(SD_blank) [1] | Could the signal be explained by system noise? | A result > LoB suggests analyte might be present. |
| Limit of Detection (LOD) | Lowest concentration reliably distinguished from the LoB [1]. | LoB + 1.645(SD_low concentration sample) or 3.3σ/S [1] [7] | Is the analyte present with statistical confidence? | A result > LOD confirms detection, but not precise amount. |
| Limit of Quantification (LOQ) | Lowest concentration measurable with acceptable accuracy and precision [1] [4]. | 10σ/S [7] [4] | How much analyte is present with acceptable certainty? | A result > LOQ is considered reliably quantifiable. |

Decision Workflow for Analytical Results

The logical relationship between sample concentration, the analytical process, and the interpretation of results based on LoB, LOD, and LOQ can be visualized as a decision workflow. The following diagram guides the user from sample analysis to the final conclusion about detection and quantification.

Diagram 1: Decision workflow for interpreting results against LoB, LOD, and LOQ.
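One possible reading of this decision workflow, based on the implications listed in Table 1, is sketched below. The function name `interpret_result`, the category labels, and the example limits are illustrative assumptions, not part of any guideline.

```python
def interpret_result(value, lob, lod, loq):
    """Classify a measured concentration against LoB, LOD and LOQ."""
    if not (lob <= lod <= loq):
        raise ValueError("expected LoB <= LOD <= LOQ")
    if value > loq:
        return "quantifiable"
    if value > lod:
        return "detected"
    if value > lob:
        return "possibly present"
    return "not detected"


# Hypothetical limits in concentration units (e.g. µM).
LOB, LOD, LOQ = 0.12, 0.17, 0.50
for measured in (0.05, 0.15, 0.30, 0.80):
    print(measured, "->", interpret_result(measured, LOB, LOD, LOQ))
```

A result labelled "detected" would typically be reported as present but below the LOQ rather than as a numerical concentration.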

Standard Methodologies for Determination

Regulatory bodies like the International Council for Harmonisation (ICH) provide guidelines for determining LOD and LOQ, offering several accepted approaches [7] [4]. The choice of method often depends on the nature of the analytical technique and the available data.

Visual Evaluation

The visual evaluation method is a direct, non-instrumental approach. It involves analyzing samples with known, decreasing concentrations of the analyte and determining the lowest level at which the analyte can be seen to be present (for LOD) or quantified (for LOQ) [4]. For example, in a titration, the LOQ might be the concentration at which a color change is first consistently observed [4]. While simple, this method is subjective and is generally considered less rigorous than instrumental approaches.

Signal-to-Noise Ratio (S/N)

This method is commonly applied in techniques that produce a chromatographic or spectroscopic baseline, such as HPLC. The noise is the baseline fluctuation, and the signal is the height of the analyte peak [7] [4]. The LOD is generally defined as a signal-to-noise ratio of 3:1, while the LOQ is defined as a ratio of 10:1 [4] [8]. This method is practical and straightforward but requires a stable baseline for accurate assessment.
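As a rough sketch of the signal-to-noise check, the snippet below takes the noise as the standard deviation of a baseline segment and the signal as the peak height above the mean baseline. Noise conventions differ between guidelines and instruments (peak-to-peak versus RMS, for example), so this definition, the function names, and the current values are assumptions for illustration only.

```python
from statistics import mean, stdev


def signal_to_noise(baseline, peak_value):
    """S/N = (peak - mean baseline) / SD of the baseline."""
    noise = stdev(baseline)
    if noise == 0:
        raise ValueError("baseline shows no measurable noise")
    return (peak_value - mean(baseline)) / noise


def classify_sn(sn):
    """Apply the 3:1 (LOD) and 10:1 (LOQ) conventions."""
    if sn >= 10:
        return "meets LOQ criterion"
    if sn >= 3:
        return "meets LOD criterion"
    return "below LOD criterion"


# Hypothetical baseline currents and peak current, both in µA.
baseline = [0.50, 0.52, 0.48, 0.51, 0.49, 0.50]
peak = 0.56

sn = signal_to_noise(baseline, peak)
print(f"S/N = {sn:.2f}: {classify_sn(sn)}")
```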

Standard Deviation of the Blank and the Calibration Curve Slope

This is a statistically robust method endorsed by ICH guidelines [7] [4]. It uses the standard deviation of the response (σ) and the slope (S) of the analytical calibration curve.

The standard deviation (σ) can be determined in two key ways:

- Standard Deviation of the Blank: Measuring multiple blank samples and calculating the standard deviation of their analytical response [4].

- Standard Error of the Regression: Using either the residual standard deviation of the regression line (S_y/x) or the standard deviation of the y-intercept, both obtained from a linear regression analysis of a calibration curve prepared with samples in the low concentration range [7]. This is often the simplest approach, as regression statistics are readily generated by most data analysis software.

Table 2: Overview of Methods for Determining LOD and LOQ

| Method | Principle | Typical Application | Advantages | Limitations |
|---|---|---|---|---|
| Visual Evaluation | Direct observation of analyte response (e.g., color change) [4]. | Non-instrumental methods (e.g., limit tests, titration). | Simple, no specialized equipment needed. | Subjective, less rigorous. |
| Signal-to-Noise (S/N) | Comparison of analyte signal height to baseline noise [7] [4]. | Chromatography (HPLC, GC), spectroscopy. | Intuitive, directly uses instrument output. | Requires a stable, well-defined baseline. |
| Standard Deviation & Slope | Uses statistical variation (σ) and analytical sensitivity (S) from calibration data [7] [4]. | Most instrumental techniques (HPLC, electrochemical assays). | Statistically robust, widely accepted by regulators. | Requires generation of a calibration curve. |

Experimental Protocol: Determining LOD/LOQ via Calibration Curve

For researchers in electrochemical assay development, the calibration curve method is often the most appropriate. The following workflow details the key steps for this protocol.

Diagram 2: Workflow for determining LOD and LOQ using the calibration curve method.

- Preparation of Calibration Standards: Prepare a series of standard solutions at low concentrations, typically in the range where detection and quantification limits are expected. The matrix of these standards should, as closely as possible, match that of the real samples (e.g., buffer, serum) [7].

- Analysis and Signal Recording: Analyze each calibration standard multiple times (e.g., n=3-5) using the electrochemical method (e.g., Differential Pulse Voltammetry, Electrochemical Impedance Spectroscopy). Record the analytical signal (e.g., peak current, charge-transfer resistance) for each measurement [5].

- Linear Regression Analysis: Plot the mean analytical signal (y-axis) against the concentration of the standard (x-axis). Perform a linear regression analysis on the data to obtain the calibration curve. The data system software (e.g., Microsoft Excel, specialized instrument software) will provide a regression report [7].

- Extraction of Parameters: From the linear regression report, extract two key parameters:

  - S (Slope): The slope of the calibration curve, representing the sensitivity of the assay.

  - σ (Standard Deviation): The standard error of the regression (often denoted as S_y/x), which represents the standard deviation of the vertical distances of the points from the regression line [7].

- Calculation: Apply the ICH formulas: LOD = 3.3σ/S and LOQ = 10σ/S.

- Experimental Verification: The calculated LOD and LOQ values are estimates and must be validated experimentally. Prepare and analyze at least six independent samples at the calculated LOD and LOQ concentrations. For the LOD, the analyte should be detected in nearly all replicates. For the LOQ, the measured concentrations should demonstrate acceptable precision (e.g., %CV ≤ 20%) and accuracy (e.g., bias within ±20%) [7]. If these performance criteria are not met, the estimates must be revised using a higher concentration.
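The regression and calculation steps of this protocol can be sketched with an ordinary least-squares fit written in plain Python. The calibration data and the function name `calibration_limits` are hypothetical; σ is taken here as the residual standard deviation S_y/x, which is one of the accepted choices, not the only one.

```python
from math import sqrt


def calibration_limits(conc, signal):
    """Fit signal = intercept + slope * conc and derive LOD and LOQ."""
    n = len(conc)
    if n < 3 or n != len(signal):
        raise ValueError("need at least 3 paired calibration points")
    x_mean = sum(conc) / n
    y_mean = sum(signal) / n
    sxx = sum((x - x_mean) ** 2 for x in conc)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(conc, signal))
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    # Residual standard deviation S_y/x with n - 2 degrees of freedom.
    ss_res = sum((y - (intercept + slope * x)) ** 2
                 for x, y in zip(conc, signal))
    s_yx = sqrt(ss_res / (n - 2))
    return {
        "slope": slope,
        "intercept": intercept,
        "s_yx": s_yx,
        "lod": 3.3 * s_yx / slope,
        "loq": 10 * s_yx / slope,
    }


# Hypothetical mean peak currents (µA) at five standard concentrations (µM).
conc = [1.0, 2.0, 3.0, 4.0, 5.0]
signal = [2.1, 3.9, 6.2, 7.8, 10.0]

fit = calibration_limits(conc, signal)
print(f"slope = {fit['slope']:.3f}, S_y/x = {fit['s_yx']:.3f}")
print(f"LOD = {fit['lod']:.2f} µM, LOQ = {fit['loq']:.2f} µM")
```

The resulting LOD and LOQ are in the concentration units of the standards and remain estimates until the experimental verification step confirms them.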

The Scientist's Toolkit: Essential Reagents and Materials

The successful determination of LOD and LOQ in electrochemical assay research relies on a set of essential materials and reagents. The following table details key items and their functions in the experimental process.

Table 3: Essential Research Reagent Solutions for LOD/LOQ Determination in Electrochemical Assays

| Item | Function in Experiment | Specific Application Example |
|---|---|---|
| High-Purity Analyte Standard | Serves as the reference material for preparing known concentrations for calibration standards and spiked samples [7]. | Quantifying a specific drug metabolite in serum. |
| Blank Matrix | Provides the background in which standards are prepared, crucial for accounting for matrix effects that can influence the signal [1]. | Phosphate buffer or artificial serum for preparing calibration curves. |
| Electrolyte (Supporting Electrolyte) | Carries current in the electrochemical cell, minimizes solution resistance (Rs), and controls the ionic strength and pH of the environment [5]. | Using H₂SO₄ solution for studies on Pt electrode electrocatalysis [9]. |
| Screen-Printed Electrodes (SPEs) | Disposable, miniaturized working electrodes that offer reproducibility, ease of use, and are ideal for point-of-care device development [5]. | A single-use biosensor for detecting a cardiac biomarker in blood. |
| Redox Probe | A well-characterized molecule used to characterize electrode performance and surface modification. | Using potassium ferricyanide to validate the functionality of a modified electrode. |
| Bioreceptor Molecules | Provides the high specificity of the biosensor by binding selectively to the target analyte [5]. | Antibodies, aptamers, or enzymes immobilized on the electrode surface. |

The rigorous determination of the Limit of Detection (LOD) and Limit of Quantification (LOQ) is not a mere procedural formality but a cornerstone of reliable analytical science, especially in fields like electrochemical biosensing and pharmaceutical research. As detailed in this guide, these parameters form a hierarchy of confidence: the LOD provides a statistical basis for claiming an analyte is "present," while the LOQ defines the threshold for trustworthy measurement. Adhering to standardized protocols from organizations like CLSI and ICH ensures that these limits are determined objectively and reproducibly [1] [7]. For researchers developing the next generation of diagnostic tools, a deep understanding of LOD and LOQ is indispensable for validating method sensitivity, demonstrating fitness for purpose, and ultimately, for generating data that can confidently support critical decisions in drug development and clinical diagnostics.

In the rigorous world of analytical science and drug development, the ability to reliably detect and quantify trace levels of target substances forms the cornerstone of robust method validation. Among the various Analytical Figures of Merit (AFOM), the Limit of Detection (LOD) and Limit of Quantification (LOQ) are paramount, characterizing the fundamental capability of any analytical procedure [10]. The LOD is defined as the lowest concentration of an analyte that can be reliably distinguished from a blank sample, but not necessarily quantified as an exact value [1] [4]. It represents the threshold for detection feasibility. The LOQ, a higher concentration, is the lowest level at which an analyte can not only be detected but also quantified with stated, acceptable levels of accuracy and precision (that is, acceptable bias and imprecision) [1]. Essentially, the LOD answers the question "Is it there?" while the LOQ answers "How much is there?" with confidence.

These parameters are not merely academic exercises; they are critical for ensuring that analytical methods are "fit for purpose," determining whether a protocol is applicable for a given chemical system according to the expected analyte concentration in samples [10]. In regulated environments like pharmaceutical development, demonstrating control over these limits is non-negotiable. As technological advances push detection capabilities lower, international standards have become more rigorous, making the correct calculation and reporting of LOD and LOQ a crucial task during method development and validation [10].

Theoretical Foundations and Calculation Methods

The Statistical Basis of LOD and LOQ

The determination of LOD and LOQ is rooted in statistical principles that account for the signals generated by both blank and low-concentration samples. The fundamental concept involves three key limits defined by organizations like the Clinical and Laboratory Standards Institute (CLSI) in its EP17 guideline [1]:

- Limit of Blank (LoB): The highest apparent analyte concentration expected to be found when replicates of a blank sample (containing no analyte) are tested. It is calculated to account for 95% of blank values, meaning 5% of blank measurements may falsely appear to contain analyte (a Type I or α error) [1].

- Limit of Detection (LOD): The lowest analyte concentration that can be reliably distinguished from the LoB. It is determined using both the measured LoB and test replicates of a sample containing a low concentration of the analyte [1].

- Limit of Quantitation (LOQ): The lowest concentration at which the analyte can be quantified with acceptable precision and bias, meeting predefined goals for total error [1].

The relationship between these three limits is hierarchical, with LoB < LOD ≤ LOQ. The following diagram illustrates how these limits interact statistically and their relationship to blank and low-concentration sample measurements.

Standard Calculation Approaches

Several recognized approaches exist for calculating LOD and LOQ, with the choice of method often depending on the analytical technique, regulatory requirements, and the nature of the sample matrix. The most common calculation methods are summarized in the table below.

Table 1: Common Methods for Calculating LOD and LOQ

| Method | Basis | LOD Calculation | LOQ Calculation | Typical Application |
|---|---|---|---|---|
| Signal-to-Noise (S/N) [4] | Comparison of analyte signal to baseline noise | S/N ≈ 3:1 | S/N ≈ 10:1 | Chromatographic methods (HPLC, GC) |
| Standard Deviation of Blank & Slope [4] | Uses standard deviation (σ) of blank and calibration curve slope (S) | 3.3 × σ/S | 10 × σ/S | General instrumental methods |
| Standard Deviation of Low-Concentration Sample [1] | Uses LoB and standard deviation of low-concentration sample | LoB + 1.645(SD_low concentration sample) | ≥ LOD, meets precision goals | CLSI EP17 guideline for clinical assays |
| IUPAC/Classical Method [11] | Based on standard deviation of blank (s_B) and calibration slope (m) | 3 × s_B/m | 10 × s_B/m | Fundamental research, spectroscopy |
| Propagation of Errors [11] | Accounts for uncertainty in calibration slope and intercept | Complex, includes s_m and s_i terms | Complex, includes s_m and s_i terms | High-precision requirements |

A critical aspect often overlooked is that LOD values should be reported to one significant digit only, because of the inherent 33-50% relative uncertainty in measurements where the signal is only two or three times the instrumental noise [11]. Reporting more digits overstates the actual certainty of the measurement.
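A small helper can apply this reporting rule. The sketch below rounds a computed limit to one significant digit; the function name `round_to_one_sig_fig` and the input values are illustrative assumptions.

```python
from math import floor, log10


def round_to_one_sig_fig(value):
    """Round a number to a single significant digit."""
    if value == 0:
        return 0.0
    exponent = floor(log10(abs(value)))  # position of the leading digit
    return round(value, -exponent)


# Hypothetical computed LODs in arbitrary concentration units.
for raw in (27.58, 0.29175, 1.37):
    print(raw, "->", round_to_one_sig_fig(raw))
```

Rounding is best applied only at the reporting stage, so that intermediate calculations keep their full precision.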

Experimental Protocols for LOD/LOQ Determination in Electrochemical Assays

General Workflow for Method Validation

Establishing reliable LOD and LOQ values requires a systematic experimental approach. The following workflow, adapted from tutorial literature on computing these limits for complex samples, provides a robust framework [10]:

- Define the Analytical Problem: Identify the analyte, matrix, and required detection capability.

- Select an Appropriate Blank: For complex matrices, this may involve using analyte-free matrix or a surrogate that closely mimics the sample.

- Preliminary Estimation: Use the signal-to-noise approach to estimate the range of concentrations for LOD/LOQ.

- Acquire Experimental Data: Measure multiple blank samples and low-concentration standards.

- Calculate LoB, LOD, and LOQ: Apply the chosen statistical approach consistently.

- Verification: Confirm that samples at the calculated LOD and LOQ concentrations meet the defined statistical criteria for detection and quantification.
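The verification step can be sketched as a simple check of replicate results at the candidate LOQ. The 20% limits for %CV and bias used as defaults below are example acceptance criteria, and the function name `verify_loq` and the replicate values are hypothetical; actual criteria depend on the application.

```python
from statistics import mean, stdev


def verify_loq(measured, nominal, max_cv=20.0, max_bias=20.0):
    """Check replicate results at a candidate LOQ against %CV and bias limits."""
    avg = mean(measured)
    cv = 100.0 * stdev(measured) / avg
    bias = 100.0 * (avg - nominal) / nominal
    return {
        "cv_percent": cv,
        "bias_percent": bias,
        "passes": cv <= max_cv and abs(bias) <= max_bias,
    }


# Hypothetical back-calculated concentrations for six replicates at 1.0 µM.
replicates = [0.92, 1.10, 0.85, 1.05, 0.98, 1.12]
result = verify_loq(replicates, nominal=1.0)
print(f"%CV = {result['cv_percent']:.1f}, bias = {result['bias_percent']:.1f} %, "
      f"passes = {result['passes']}")
```

If the check fails, the candidate concentration would be raised and the replicates repeated until both criteria are met.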

Case Study: Electrochemical Sensing of NADH for Anticancer Drug Screening

A recent case study on monitoring Lactate Dehydrogenase (LDH) activity through amperometric detection of NADH provides an excellent example of LOD/LOQ determination in electrochemical assays [12]. The experimental protocol can be summarized as follows:

- Objective: Develop an electrochemical alternative to UV-Vis spectroscopy for monitoring LDH activity to screen potential anticancer drugs.

- Sensor Platform: Titanium-modified glassy carbon electrode as working electrode in a standard three-electrode electrochemical cell.

- Measurement Technique: Chronoamperometry at a fixed potential of 0.66 V vs. reference.

Procedure:

- Prepare NADH standard solutions across a concentration range relevant to the enzymatic reaction.

- Immobilize LDH enzyme on functionalized mesoporous silica to create a biosensor.

- Measure chronoamperometric response for each standard concentration.

- Construct a calibration curve of current response versus NADH concentration.

- Determine LOD and LOQ from the calibration data using established statistical methods.

Key Results: The method achieved a sensitivity of 0.614 μA cm⁻² mM⁻¹, with an LOD of 27.58 μM and LOQ of 91.92 μM [12]. The authors noted that while the LOD might benefit from further optimization, the electrochemical approach offered advantages over optical methods in selectivity and resistance to interference.
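As a quick arithmetic check on the reported values (an inference from the numbers only, since the summary above does not state which multipliers were used), the ratio of the two limits is:

```latex
\frac{\mathrm{LOQ}}{\mathrm{LOD}}
  = \frac{91.92\ \mu\mathrm{M}}{27.58\ \mu\mathrm{M}}
  \approx 3.33
  \approx \frac{10}{3}
```

This is what would be expected if both limits were derived from the same σ and slope with multipliers of 3 and 10. Multipliers of 3.3 and 10 would instead give a ratio of about 3.03.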

Advanced Protocol: AI-Enhanced Electrochemical Sensor for Multiplexed Detection

Cutting-edge research now incorporates Artificial Intelligence (AI) to overcome traditional limitations in electrochemical detection. A 2025 study demonstrated an AI-assisted approach for detecting multiple quinone-family compounds in mixtures using cyclic voltammetry and square wave voltammetry [13]. The experimental workflow illustrates how modern techniques push detection limits lower:

- Sensor Fabrication: Custom screen-printed electrodes (SPEs) with graphite ink working/counter electrodes and Ag/AgCl reference electrode.

- AI Integration: A machine learning model based on Gramian Angular Field (GAF) transformation was developed to resolve overlapping voltammetric peaks from multiple electroactive species with similar redox potentials.

- Analysis: The system was tested with individual solutions and mixtures of hydroquinone, benzoquinone, catechol, and ferrocyanide in both deionized and tap water.

- Performance: The AI-assisted square wave voltammetry approach achieved significantly lower LODs (0.8-4.2 μM in tap water) compared to conventional cyclic voltammetry (8.8-14.6 μM in tap water), demonstrating the power of advanced data processing to enhance sensor capabilities [13].

Comparative Analysis of Electrochemical Sensing Platforms

Electrochemical sensors have gained prominence for industrial and clinical applications due to their high sensitivity, rapid analysis, cost-effectiveness, and potential for miniaturization [14]. The table below compares the performance of different electrochemical sensing platforms, highlighting their achieved LOD and LOQ values for various applications.

Table 2: Comparison of Electrochemical Sensing Platforms and Their Performance

| Sensor Platform / Application | Target Analyte | Technique | LOD | LOQ | Reference |
|---|---|---|---|---|---|
| Ti-modified GCE / Anticancer drug screening | NADH | Chronoamperometry | 27.58 μM | 91.92 μM | [12] |
| Bare SPE (in tap water) / Quinones | Hydroquinone | Square Wave Voltammetry | 1.3 μM | 4.3 μM | [13] |
| Bare SPE (in tap water) / Quinones | Catechol | Square Wave Voltammetry | 4.2 μM | 13.6 μM | [13] |
| Au-GQD modified paper electrode / Prostate cancer | PCA3 DNA | Cyclic Voltammetry | 1.37 fM | 4.08 fM | [15] |
| Au-GQD modified paper electrode / Prostate cancer | PCA3 DNA | EIS | 1.41 fM | 4.27 fM | [15] |

The exceptional sensitivity (femtomolar LOD) achieved by the Au-GQD modified paper electrode for DNA detection highlights how nanomaterial integration can dramatically enhance electrochemical sensor performance [15]. Such advancements are particularly valuable for detecting low-abundance biomarkers in clinical diagnostics.

Essential Reagents and Materials for Electrochemical Assay Development

The development and validation of robust electrochemical methods require specific reagents and materials. The following table details key components used in the featured experiments and their critical functions.

Table 3: Research Reagent Solutions for Electrochemical Assay Development

| Reagent / Material | Function / Application | Example from Literature |
|---|---|---|
| Screen-Printed Electrodes (SPEs) | Disposable, cost-effective sensor substrates; enable decentralized testing | Graphite ink WE/CE, Ag/AgCl RE for quinone detection [13] |
| Glassy Carbon Electrode (GCE) | Versatile working electrode material; can be modified for enhanced performance | Ti-modified GCE for NADH detection [12] |
| Nanomaterial Modifiers | Enhance surface area, electrocatalysis, and sensitivity | Au-Graphene Quantum Dots (Au-GQD) for DNA sensing [15] |
| Redox Probes | Provide reference signals for method validation and characterization | Ferri/Ferrocyanide couple in EIS and CV [13] [15] |
| Enzyme Immobilization Matrices | Support bio-recognition elements on electrode surfaces | Functionalized mesoporous silica for LDH immobilization [12] |
| Buffer Systems | Maintain consistent pH and ionic strength for stable electrochemical measurements | PBS ferri/ferro cyanide (0.1 M, pH 7.0) for EIS characterization [15] |

The determination of LOD and LOQ is not a mere procedural formality but a fundamental aspect of demonstrating methodological competence and reliability. As the case studies in electrochemical sensing illustrate, properly validated methods with well-characterized limits form the foundation for credible scientific research and effective drug development. The ongoing integration of advanced materials like nanomaterials and sophisticated data processing techniques like artificial intelligence continues to push these limits lower, expanding the frontiers of what is detectable and quantifiable. For researchers and drug development professionals, a thorough understanding and rigorous application of LOD and LOQ principles ensure that analytical methods are truly "fit for purpose," providing the reliable data necessary for critical decisions in both the laboratory and the clinic.

In the field of analytical chemistry and biosensing, the Limit of Detection (LOD) and Limit of Quantification (LOQ) serve as fundamental performance parameters that define the operational boundaries of any analytical method. The LOD represents the lowest analyte concentration that can be reliably distinguished from analytical noise, while the LOQ defines the lowest concentration that can be quantitatively measured with acceptable precision and accuracy [16] [1]. These parameters are particularly crucial in electrochemical biosensing, where researchers and drug development professionals require robust methods for detecting biomarkers, drugs, and contaminants at increasingly lower concentrations.

Despite universal recognition of their importance, no single international standard governs the determination of LOD and LOQ. Prominent organizations including the International Union of Pure and Applied Chemistry (IUPAC), the United States Environmental Protection Agency (USEPA), and the European-based EURACHEM have established related but distinct approaches for characterizing these fundamental method performance characteristics [16]. This divergence has created a challenging landscape for researchers who must navigate different validation requirements across regulatory jurisdictions and scientific disciplines.

This comparison guide objectively examines the methodologies prescribed by these leading international guidelines, with a specific focus on their application to electrochemical assays. By synthesizing current research and experimental data, we provide a structured framework to help researchers select appropriate validation approaches and interpret results across different regulatory contexts.

Theoretical Foundations and Definitions

Core Concepts and Terminology

Understanding the conceptual framework underlying detection and quantification limits is essential before comparing methodological approaches. The Limit of Blank (LoB) represents the highest apparent analyte concentration expected when replicates of a blank sample (containing no analyte) are tested. Statistically, the LoB is defined as mean_blank + 1.645(SD_blank), the threshold that only 5% of blank measurements are expected to exceed; a signal above it is therefore unlikely to arise from the blank alone [1].

The Limit of Detection (LOD) is the lowest analyte concentration that can be reliably distinguished from the LoB with specified confidence. According to Clinical and Laboratory Standards Institute (CLSI) EP17 guidelines, LOD is calculated as LOD = LoB + 1.645(SD_low concentration sample), ensuring that 95% of measurements at this concentration will exceed the LoB [1]. The Limit of Quantification (LOQ) extends beyond mere detection to represent the lowest concentration at which the analyte can be measured with predefined goals for both bias and imprecision [1].

The Harmonization Challenge

Multiple designations exist for these parameters across different guidelines, including "limit of determination," "limit of reporting," and "limit of application" [16]. This terminology variation reflects deeper methodological differences in how these fundamental parameters are established and validated. The absence of a universal protocol has led to varied approaches among researchers, making direct comparison of method performance challenging across studies [16].

Table 1: Fundamental Definitions of Analytical Sensitivity Parameters

| Parameter | Definition | Key Statistical Basis |
|---|---|---|
| Limit of Blank (LoB) | Highest apparent analyte concentration expected from a blank sample | mean_blank + 1.645(SD_blank) |
| Limit of Detection (LOD) | Lowest concentration reliably distinguished from LoB | LoB + 1.645(SD_low concentration sample) |
| Limit of Quantification (LOQ) | Lowest concentration measurable with acceptable precision and accuracy | Predefined targets for bias and imprecision must be met |

Comparative Analysis of International Guidelines

IUPAC Approaches

The International Union of Pure and Applied Chemistry (IUPAC) provides foundational statistical approaches for determining LOD and LOQ, emphasizing calibration-based methods and signal-to-noise ratios. Methods in this tradition typically calculate LOD as 3.3σ/S and LOQ as 10σ/S, where σ represents the standard deviation of the response and S represents the slope of the calibration curve [17]. The classical IUPAC expression uses a multiplier of 3 rather than 3.3 (3 × s_B/m) [11], so the multiplier applied should be stated alongside any reported limit. This approach is widely cited in academic research but has been criticized for potentially underestimating the limits in some practical applications [16].

USEPA Methodologies

The United States Environmental Protection Agency (USEPA) emphasizes empirical determination of method detection limits (MDLs) through extensive replication at low concentrations. The standard USEPA approach involves analyzing at least seven replicates of a sample prepared at a low concentration and calculating MDL as t_(n-1,1-α=0.99) × SD, where t is the Student's t-value for a 99% confidence level with n-1 degrees of freedom [1]. This procedure places strong emphasis on matrix effects and requires verification that the calculated MDL provides reliable detection in real sample matrices.
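The replicate-based MDL calculation described above can be sketched in a few lines. This is a minimal illustration rather than the official procedure: the replicate values are hypothetical, the function name `method_detection_limit` is made up for this example, and the one-sided 99% Student's t quantiles are hardcoded for 6 to 9 degrees of freedom only.

```python
import statistics

# One-sided Student's t quantiles at the 99% level, keyed by degrees of freedom.
T_99 = {6: 3.143, 7: 2.998, 8: 2.896, 9: 2.821}

def method_detection_limit(replicates):
    """Return MDL = t(n-1, 0.99) x sample SD of low-level replicates."""
    n = len(replicates)
    if n < 7:
        raise ValueError("at least seven replicates are required")
    df = n - 1
    if df not in T_99:
        raise ValueError(f"no tabulated t-value for {df} degrees of freedom")
    return T_99[df] * statistics.stdev(replicates)

# Hypothetical replicate results (µM) for a low-concentration sample.
spiked = [0.52, 0.48, 0.55, 0.47, 0.51, 0.49, 0.53]
mdl = method_detection_limit(spiked)
print(f"MDL = {mdl:.3f} µM")
```

With seven replicates the multiplier is 3.143, so the MDL is a little more than three times the replicate standard deviation.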

EURACHEM Guidelines

EURACHEM guidelines take a distinct approach by focusing on measurement uncertainty throughout the analytical range. The uncertainty profile method, aligned with EURACHEM principles, is a graphical validation tool that combines uncertainty intervals with acceptability limits [16]. This method computes β-content tolerance intervals to establish the concentration range where measurement uncertainty remains within acceptable boundaries. The LOQ is determined as the point where the uncertainty profile intersects with acceptability limits, providing a practical assessment of the method's quantitative range [16].

Table 2: Comparison of International Guidelines for LOD/LOQ Determination

| Guideline | Primary Approach | Key Equations/Parameters | Typical Application Context |
|---|---|---|---|
| IUPAC | Calibration curve & signal-to-noise | LOD = 3.3σ/S, LOQ = 10σ/S | Fundamental research, academic studies |
| USEPA | Empirical replication | MDL = t_(n-1,0.99) × SD | Environmental monitoring, regulatory compliance |
| EURACHEM | Measurement uncertainty profiles | β-content tolerance intervals, uncertainty intervals | Pharmaceutical analysis, quality control |

Experimental Protocols for LOD and LOQ Determination

Calibration Curve Method (IUPAC-Aligned)

The calibration curve approach requires preparing a series of standard solutions across the expected concentration range, including concentrations near the anticipated detection limit. Following analysis, the standard deviation of the response (σ) is determined from the y-intercept variability or from replicate measurements of low-concentration standards. The slope (S) of the calibration curve is calculated using linear regression. LOD and LOQ are then derived as 3.3σ/S and 10σ/S, respectively [17]. This method is computationally straightforward but may not adequately account for matrix effects in complex samples.
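As a rough sketch of the calibration curve calculation, the following fits an ordinary least-squares line and takes σ as the standard deviation of the y-intercept, one of the two options mentioned above. The calibration data are hypothetical and the function names are chosen for this example only.

```python
import math

def fit_line(x, y):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def intercept_sd(x, y, slope, intercept):
    """Standard deviation of the y-intercept of the fitted line."""
    n = len(x)
    mean_x = sum(x) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    s_res = math.sqrt(ss_res / (n - 2))  # residual standard deviation
    return s_res * math.sqrt(sum(xi ** 2 for xi in x) / (n * sxx))

def lod_loq_from_calibration(x, y):
    """Return (LOD, LOQ) as 3.3*sigma/S and 10*sigma/S."""
    slope, intercept = fit_line(x, y)
    sigma = intercept_sd(x, y, slope, intercept)
    return 3.3 * sigma / slope, 10 * sigma / slope

# Hypothetical calibration data: concentration (µM) vs. peak current (µA).
conc = [0.5, 1.0, 2.0, 4.0, 8.0]
current = [1.1, 2.0, 4.1, 7.9, 16.1]
lod, loq = lod_loq_from_calibration(conc, current)
print(f"LOD = {lod:.3f} µM, LOQ = {loq:.3f} µM")
```

Because both limits share the same σ and S, the LOQ from this approach is always 10/3.3 (about three) times the LOD.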

Signal-to-Noise Ratio Method

Primarily applied to chromatographic or electrochemical techniques displaying baseline noise, the signal-to-noise method determines LOD as the concentration producing a signal 3 times the noise level, while LOQ corresponds to a signal 10 times the noise level [17]. This approach provides practical, instrument-based estimates but may be influenced by subjective assessment of noise magnitude and requires verification with actual samples.
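One way to reduce the subjectivity of the noise estimate is to compute it from a recorded baseline segment. The sketch below makes two assumptions that are not part of the source: noise is taken as the standard deviation of the baseline (peak-to-peak noise is another common convention), and the response is assumed to scale linearly with concentration down to the limit. All values are hypothetical.

```python
import statistics

def signal_to_noise(peak_height, baseline):
    """S/N with noise estimated as the sample SD of a baseline segment."""
    return peak_height / statistics.stdev(baseline)

def concentration_at_snr(conc, peak_height, baseline, target_snr):
    """Linearly scale a measured standard to the concentration giving target_snr."""
    return conc * target_snr / signal_to_noise(peak_height, baseline)

# Hypothetical baseline current samples (µA) and a 5 µM standard with a 1.2 µA peak.
baseline = [0.02, -0.01, 0.03, -0.02, 0.00, 0.01, -0.03, 0.02]
lod = concentration_at_snr(5.0, 1.2, baseline, target_snr=3)
loq = concentration_at_snr(5.0, 1.2, baseline, target_snr=10)
print(f"S/N-based LOD = {lod:.3f} µM, LOQ = {loq:.3f} µM")
```

Estimates obtained this way should still be confirmed by measuring samples prepared near the calculated limits.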

Empirical Method (USEPA-Aligned)

The empirical approach requires analyzing numerous replicates (typically 20-60) of both blank samples and samples containing low analyte concentrations [1]. The mean and standard deviation are calculated for both sample sets, followed by computation of LoB as mean_blank + 1.645(SD_blank). The LOD is then determined as LoB + 1.645(SD_low concentration sample) [1]. This method demands more extensive experimental work but provides statistically robust estimates that account for matrix effects.
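The two formulas above translate directly into code. The following is a minimal sketch with hypothetical function names; in practice each list would hold the 20 to 60 replicate results described in the text.

```python
import statistics

def limit_of_blank(blanks):
    """LoB = mean_blank + 1.645 * SD_blank."""
    return statistics.mean(blanks) + 1.645 * statistics.stdev(blanks)

def limit_of_detection(blanks, low_samples):
    """LOD = LoB + 1.645 * SD of the low-concentration sample."""
    return limit_of_blank(blanks) + 1.645 * statistics.stdev(low_samples)

# Tiny hypothetical data sets, used only to show the arithmetic.
blanks = [1.0, 2.0, 3.0]        # mean 2, SD 1
low_samples = [4.0, 5.0, 6.0]   # SD 1
print(limit_of_blank(blanks), limit_of_detection(blanks, low_samples))
```

Note that the results are in the same units as the inputs; if raw signals (e.g., currents) are used, the limits still have to be converted to concentration through the calibration curve.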

Uncertainty Profile Method (EURACHEM-Aligned)

The uncertainty profile approach begins with computing β-content tolerance intervals for each concentration level using the formula Ȳ ± k_tol × σ̂_m, where Ȳ is the mean result, k_tol is the tolerance factor, and σ̂_m is the estimated reproducibility standard deviation [16]. Measurement uncertainty u(Y) is then calculated as (U − L)/(2t(ν)), where U and L represent the upper and lower tolerance limits, and t(ν) is the Student's t quantile for ν degrees of freedom [16]. The uncertainty profile is constructed by plotting |Ȳ ± k × u(Y)| against concentration, where k is the coverage factor, and comparing it to the acceptability limits (λ). The LOQ is identified as the concentration where the uncertainty profile intersects the acceptability limit [16].
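The uncertainty calculation can be illustrated with a simplified sketch. It assumes the tolerance limits and t quantiles have already been computed for each level, expresses results as relative bias in percent, and reports the lowest tested level whose interval stays within ±λ instead of interpolating the exact intersection point. The data and function names are hypothetical.

```python
def standard_uncertainty(lower, upper, t_quantile):
    """u(Y) = (U - L) / (2 * t(nu)) from the tolerance interval limits."""
    return (upper - lower) / (2 * t_quantile)

def within_acceptability(mean_result, u, k, lam):
    """True when both ends of mean ± k*u lie inside the limits (-lam, lam)."""
    return abs(mean_result + k * u) <= lam and abs(mean_result - k * u) <= lam

def loq_from_profile(levels, lam, k=2.0):
    """Lowest level from which this and all higher levels are acceptable.

    levels: iterable of (concentration, mean_bias, L, U, t_quantile).
    Returns None if even the highest level fails.
    """
    loq = None
    for conc, mean_bias, lower, upper, t_q in sorted(levels, reverse=True):
        u = standard_uncertainty(lower, upper, t_q)
        if not within_acceptability(mean_bias, u, k, lam):
            break
        loq = conc
    return loq

# Hypothetical validation summary: relative bias (%) and tolerance limits (%).
levels = [
    (1.0, 5.0, -25.0, 35.0, 2.0),
    (2.0, 2.0, -8.0, 12.0, 2.0),
    (5.0, 1.0, -4.0, 6.0, 2.0),
]
print(loq_from_profile(levels, lam=20.0))
```

A finer estimate would interpolate between the last failing and first passing levels, which is what the graphical intersection does.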

Practical Applications in Electrochemical Assays

LOD/LOQ Considerations in Electrochemical Biosensor Development

Electrochemical biosensors represent a rapidly advancing field where LOD and LOQ determination is critical for applications in clinical diagnostics, environmental monitoring, and pharmaceutical analysis. These sensors typically consist of three main components: a biorecognition element (e.g., enzyme, antibody), a signal transducer, and a data analysis module [18]. The configuration and materials of the working electrode significantly impact sensitivity parameters, with gold electrodes of sufficient thickness (e.g., 3.0 μm) demonstrating superior stability and performance compared to thinner or copper-based alternatives [19].

Nanomaterial integration has dramatically enhanced electrochemical biosensor capabilities. Zinc oxide nanorods (ZnO NRs) and ZnO NRs:reduced graphene oxide (RGO) composites provide enhanced pathways for antibody immobilization and electron transfer, enabling detection of biomarkers like 8-hydroxy-2'-deoxyguanosine (8-OHdG) in the range of 0.001–5.00 ng·mL⁻¹ [19]. Such enhancements highlight how proper sensor design coupled with appropriate LOD/LOQ validation methods can achieve clinically relevant detection limits.

Comparative Performance Across Detection Methods

Different electrochemical detection techniques exhibit varying inherent sensitivities that influence LOD and LOQ values. Voltammetric methods including cyclic voltammetry (CV), differential-pulse voltammetry (DPV), and square-wave voltammetry (SWV) offer different sensitivity characteristics. For hydrazine detection, linear-sweep voltammetry (LSV) demonstrated an LOD of 0.164 ± 0.013 μM, while CV provided a slightly improved LOD of 0.143 ± 0.011 μM [18]. Similarly, SWV has enabled simultaneous detection of the neurotransmitters norepinephrine and dopamine with LODs of 0.26 μM and 0.34 μM, respectively [18].

Table 3: LOD/LOQ Values from Experimental Studies Across Methodologies

| Analytical Method | Analyte | Matrix | LOD | LOQ | Reference Approach |

|---|---|---|---|---|---|

| HPLC-UV | Carbamazepine | Standard solution | Variable by method | Variable by method | Signal-to-noise vs. SDR [17] |

| HPLC-UV | Phenytoin | Standard solution | Variable by method | Variable by method | Signal-to-noise vs. SDR [17] |

| HPLC | Sotalol | Plasma | Underestimated (classical) | Underestimated (classical) | Classical vs. graphical strategies [16] |

| Electrochemical (LSV) | Hydrazine | Standard solution | 0.164 ± 0.013 μM | Not specified | Linear sweep voltammetry [18] |

| Electrochemical (CV) | Hydrazine | Standard solution | 0.143 ± 0.011 μM | Not specified | Cyclic voltammetry [18] |

| Electrochemical (SWV) | Norepinephrine | Standard solution | 0.26 μM | Not specified | Square-wave voltammetry [18] |

| Electrochemical (SWV) | Dopamine | Standard solution | 0.34 μM | Not specified | Square-wave voltammetry [18] |

| Electrochemical immunosensor | 8-OHdG | Urine | 0.001 ng·mL⁻¹ | Within 0.001–5.00 ng·mL⁻¹ | ZnO NRs-based sensor [19] |

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful implementation of LOD and LOQ determination methods requires specific materials and reagents tailored to the analytical technique and guideline being followed.

Table 4: Essential Research Reagents and Materials for LOD/LOQ Studies

| Reagent/Material | Function/Purpose | Application Context |

|---|---|---|

| High-purity analyte standards | Preparation of calibration standards and quality controls | All analytical methods |

| Blank matrix samples | Determination of Limit of Blank (LoB) | CLSI EP17, USEPA methods |

| Low-concentration QC samples | Empirical determination of LOD | USEPA, EURACHEM methods |

| Electrochemical mediators | Facilitate electron transfer between enzyme and electrode | Electrochemical biosensors [20] |

| ZnO nanorods & graphene composites | Enhance electrode surface area and electron transfer | Electrochemical sensor optimization [19] |

| Reference electrode materials | Provide stable reference potential | Electrochemical methods [19] |

| Stationary phases & columns | Compound separation | HPLC-based methods [16] [17] [21] |

| Mobile phase components | Elute analytes from column | HPLC-based methods [16] |

Comparative studies consistently demonstrate that different LOD/LOQ determination methods yield significantly different values for the same analytical method. Research has shown that the classical strategy based on statistical concepts provides underestimated values of LOD and LOQ, while graphical tools such as uncertainty and accuracy profiles offer more realistic assessments [16]. Similarly, the signal-to-noise ratio method typically provides lower LOD and LOQ values than approaches based on the standard deviation of the response and the slope of the calibration curve [17].

The selection of an appropriate LOD/LOQ determination method should consider the intended application of the analytical method, regulatory requirements, and the nature of the sample matrix. For electrochemical biosensors intended for clinical use, approaches that incorporate matrix effects and measurement uncertainty (e.g., EURACHEM-aligned methods) provide more realistic performance assessments. The convergence of LOD and LOQ values obtained from uncertainty and accuracy profiles suggests these graphical methods offer reliable alternatives to classical approaches [16].

As electrochemical technologies advance toward greater sensitivity and miniaturization, appropriate validation methodologies will become increasingly important for translating research innovations into clinically and commercially viable applications. By understanding the theoretical foundations and practical implications of different international guidelines, researchers can make informed decisions about method validation strategies that ensure reliable, defensible analytical results.

In the rigorous world of analytical chemistry and assay development, particularly within pharmaceutical and clinical research, understanding the fundamental performance parameters of a detection method is paramount. Three concepts form the cornerstone of this understanding: sensitivity, noise, and the detection limit. While often mentioned together, their distinct meanings and intricate relationship are frequently misunderstood. Sensitivity, defined as the ability of an analytical method to produce a signal change for a given change in analyte concentration, is often mistakenly used interchangeably with the detection limit. The limit of detection (LOD), conversely, is the lowest concentration of an analyte that can be reliably distinguished from a blank sample with a stated confidence level. The critical link between them is noise—the random fluctuation in the analytical signal that ultimately determines the smallest detectable concentration.

This guide explores the fundamental link between these parameters, framing the discussion within the context of electrochemical assays, a prominent technology in drug development and clinical diagnostics. We will objectively compare how different analytical techniques and calculation approaches influence the reported LOD and limit of quantification (LOQ), providing researchers with a clear framework for evaluating and comparing assay performance. As [22] succinctly states, "Sensitivity ≠ detection limit," a premise that forms the thesis of this exploration. The detection limit is determined not by sensitivity alone, but by the signal-to-noise ratio (SNR), where a signal must be significantly larger than the noise level to be detected with confidence [22]. This relationship is universal, impacting technologies from quartz crystal microbalances (QCM) to HPLC and electrochemical sensors.

Theoretical Framework: The Sensitivity-Noise-LOD Relationship

Dissecting the Terminology

To properly compare analytical methods, a precise understanding of terminology is essential. The following definitions are based on established clinical and analytical guidelines [2] [1]:

- Limit of Blank (LoB): The highest apparent analyte concentration expected to be found when replicates of a blank sample (containing no analyte) are tested. It is calculated as LoB = mean_blank + 1.645(SD_blank), assuming a Gaussian distribution where 95% of blank values fall below this limit [1].

- Limit of Detection (LOD or LoD): The lowest analyte concentration that can be reliably distinguished from the LoB. It requires a signal that is statistically unlikely to be produced by a blank sample. According to CLSI EP17 guidelines, it is determined using both the LoB and test replicates of a low-concentration sample: LoD = LoB + 1.645(SD_low concentration sample) [1]. This ensures that 95% of measurements at the LOD will exceed the LoB, minimizing false negatives.

- Limit of Quantification (LOQ or LoQ): The lowest concentration at which the analyte can not only be detected but also quantified with acceptable precision and accuracy (defined by predetermined goals for bias and imprecision) [1]. The LOQ is always greater than or equal to the LOD.

- Sensitivity: In the context of calibration, sensitivity refers to the slope of the calibration curve—the change in instrument response per unit change in analyte concentration [22] [2]. It is a conversion factor and should not be confused with the LOD.

- Noise: The random fluctuation in the analytical signal observed even in the absence of the analyte. It is the key factor that limits the detection of small signals.

The Signal-to-Noise Ratio: The Central Connector

The conceptual link between sensitivity, noise, and the LOD is powerfully illustrated by the Signal-to-Noise Ratio (SNR). A high sensitivity is beneficial only if it is not accompanied by a proportional increase in noise.

Diagram 1: The core relationship between sensitivity, noise, and LOD. The LOD is determined by the Signal-to-Noise Ratio (SNR), which is influenced by both sensitivity and noise.

As shown in Diagram 1, the SNR is the mediator. A method with high sensitivity will produce a larger signal for a given mass or concentration change. However, if the noise level is also high, the useful signal (the part significantly larger than the noise) may not improve. As [22] explains with an analogy, a thermometer displaying readings in Fahrenheit (larger numbers) is not inherently better than one displaying Celsius; what matters is the spread or noise in the measurements. Therefore, "the detection limit is determined by the signal-to-noise ratio, SNR. Noise will be present in all measurements, and it will prevent signals smaller than or comparable to the noise level from being confidently measured" [22].

This principle is practically demonstrated in QCM instruments, where sensors with higher fundamental resonant frequency offer higher sensitivity but often exhibit proportionally higher noise levels. The result is that the SNR, and thus the effective detection limit, can remain unchanged between instruments with different sensitivity specifications [22].
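A small numerical sketch makes the point concrete. It assumes the common convention that the detection limit corresponds to a signal three times the noise, and the two "instruments" below are hypothetical.

```python
import math

def detection_limit(noise_sd, sensitivity, snr_required=3.0):
    """Smallest concentration whose signal reaches the required S/N."""
    return snr_required * noise_sd / sensitivity

# Hypothetical instruments: B has twice the sensitivity of A but also twice the noise.
lod_a = detection_limit(noise_sd=0.5, sensitivity=10.0)
lod_b = detection_limit(noise_sd=1.0, sensitivity=20.0)
print(lod_a, lod_b, math.isclose(lod_a, lod_b))
```

Doubling the sensitivity changes nothing here because the noise doubles with it; only a change in the ratio of noise to sensitivity moves the detection limit.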

Comparative Analysis of LOD/LOQ Across Analytical Techniques

Electrochemical vs. Spectroscopic Methods: A Case Study

Electrochemical methods are gaining traction as promising alternatives to traditional optical techniques like UV-Vis spectroscopy due to their potential for higher sensitivity, portability, and lower cost. A direct comparative case study on Lactate Dehydrogenase (LDH) activity monitoring illustrates this well.

Table 1: Comparison of Electrochemical and UV-Vis Methods for LDH/NADH Detection

| Parameter | Electrochemical (Amperometric) | UV-Vis Spectroscopy | Implications for Assay Performance |

|---|---|---|---|

| Detection Principle | Amperometric detection of NADH at 0.66 V [12] | Absorbance measurement of NADH [12] | Electrochemical offers higher selectivity in complex matrices. |

| LOD for NADH | 27.58 μM [12] | Not specified, but implied to be higher than the electrochemical method [12] | Lower LOD improves ability to detect low analyte levels. |

| LOQ for NADH | 91.92 μM [12] | Not specified | Defines the lower limit for precise quantification. |

| Sensitivity | 0.614 μA cm⁻² mM⁻¹ [12] | Not specified | Steeper calibration curve slope. |

| Key Advantage | Higher selectivity and stability against interference [12] | Widely accessible instrumentation | Electrochemical is superior for complex samples like cell lysates. |

The study concluded that the electrochemical setup, using a Ti-modified glassy carbon electrode, provided higher selectivity and stability against interference from several compounds compared to the optical method, despite noting that the LOD could benefit from further optimization [12]. This demonstrates that raw sensitivity is not the only factor; resistance to matrix interference is a critical advantage for real-world applications like anticancer drug screening.

Variability in LOD/LOQ Calculation Methods

A significant challenge when comparing LOD values from different studies or product specifications is the lack of a universally mandated calculation method. The approach taken can significantly influence the reported limits, making direct comparisons misleading.

Table 2: Impact of Different LOD/LOQ Calculation Methods on Reported Values

| Analytical Method | Comparison Context | Variability in LOD/LOQ Findings | Key Takeaway |

|---|---|---|---|

| HPLC-UV [17] | Signal-to-Noise (S/N) vs. Standard Deviation of Response (SDR) | S/N method yielded the lowest LOD/LOQ values for carbamazepine and phenytoin. SDR method resulted in the highest values. | Methodology drastically affects reported sensitivity. Following standardized criteria (e.g., FDA) is crucial. |

| Electronic Noses (eNoses) [23] | PCA vs. PLSR vs. PCR multivariate models | LOD estimates for beer volatiles (e.g., diacetyl) differed by a factor of up to eight between methods. | For multidimensional data, the data processing model is a major variable in LOD determination. |

| Clinical Assays [1] | Traditional (Blank + 2SD) vs. CLSI EP17 (LoB + 1.645 SD) | The EP17 protocol is empirically more robust as it uses low-concentration samples, proving distinguishability from the blank. | The traditional method "defines only the ability to measure nothing" [1], underscoring the need for rigorous standards. |

This variability highlights the importance for researchers to not only report the LOD/LOQ values but also to explicitly state the calculation methodology and the number of replicates used. As shown in Table 2, an LOD calculated from the standard deviation of a blank is not equivalent to one derived from a calibration curve or a multivariate model.

Experimental Protocols for LOD/LOQ Determination

Standard Protocol for Electrochemical LOD/LOQ Estimation

For researchers developing electrochemical assays, the following workflow, synthesized from the cited literature, provides a robust path for determining LOD and LOQ.

Diagram 2: A generalized experimental workflow for determining LoB, LoD, and LoQ in analytical assays.

- Blank Measurement: Analyze multiple replicates (n ≥ 20 for verification; n=60 for establishment) of a blank solution containing all components except the analyte [1]. Record the analytical signal (e.g., current in amperometry, peak height in DPV).

- Calibration Curve Construction: Prepare and analyze a series of standard solutions with known analyte concentrations across the expected range. This establishes the relationship between concentration and response (the sensitivity/slope) [2].

- Calculate Limit of Blank (LoB): Compute the LoB using the formula LoB = mean_blank + 1.645(SD_blank) [1]. This establishes the threshold above which a signal is considered non-blank.

- Analyze Low-Concentration Sample: Prepare and analyze multiple replicates (n ≥ 20) of a sample with a concentration near the expected LOD.

- Calculate Limit of Detection (LOD):

  - Empirical Method (per CLSI EP17): Use the data from the low-concentration sample to calculate LOD = LoB + 1.645(SD_low_concentration_sample) [1]. Verify that no more than 5% of the measurements at this concentration fall below the LoB.

  - Calibration Curve Method: The LOD can also be estimated as LOD = 3.3 * σ / S, where σ is the standard deviation of the blank response (or the y-intercept residuals of the regression line), and S is the slope of the calibration curve [2].

- Establish Limit of Quantification (LOQ): The LOQ is the lowest concentration that can be measured with predefined precision and accuracy (e.g., ≤20% CV). It is typically calculated as LOQ = 10 * σ / S [2]. Test replicates at this concentration to confirm that the bias and imprecision meet the predefined goals [1].
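The two verification checks in the workflow above (no more than 5% of low-concentration results below the LoB, and a coefficient of variation within the precision goal at the LOQ) can be sketched as follows. The function names and the example data are hypothetical, and the 20% CV limit is just the example goal quoted in the text.

```python
import statistics

def fraction_below(values, threshold):
    """Fraction of measurements falling below a threshold."""
    return sum(v < threshold for v in values) / len(values)

def lod_verified(low_samples, lob, max_fraction=0.05):
    """True when at most max_fraction of low-level results fall below the LoB."""
    return fraction_below(low_samples, lob) <= max_fraction

def percent_cv(values):
    """Coefficient of variation in percent (sample SD / mean)."""
    return 100 * statistics.stdev(values) / statistics.mean(values)

def loq_precision_ok(values, max_cv=20.0):
    """True when replicate imprecision at the candidate LOQ meets the goal."""
    return percent_cv(values) <= max_cv

# Hypothetical data: 20 low-level replicates, one of which falls below LoB = 2.0.
low_samples = [3.0] * 19 + [1.0]
print(lod_verified(low_samples, lob=2.0))
print(loq_precision_ok([9.0, 10.0, 11.0]))
```

Bias against the nominal concentration would be checked in the same way before accepting a candidate LOQ.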

Case Study: LOD for an IL-6 Electrochemical Immunosensor

A 2025 study developing an electrochemical sensor for Interleukin-6 (IL-6) following spinal cord injury provides a specific example of a high-performance assay [24]. The sensor was constructed by modifying a platinum-carbon electrode with Prussian blue nanoparticles (PBNPs) and thionin acetate (TA), which provided a platform for immobilizing IL-6 antibodies.

- Detection Principle: The specific binding of IL-6 to the immobilized antibodies formed an insulating protein layer on the electrode surface, hindering electron transfer and causing a measurable change in the differential pulse voltammetry (DPV) signal [24].

- LOD Achievement: The researchers achieved an exceptionally low LOD of 5.4 pg mL⁻¹, which is crucial for detecting clinically relevant concentrations of the inflammatory cytokine.

- Specificity Testing: The sensor's performance was validated by testing against potential interferents, including Bovine Serum Albumin (BSA), interleukin-4 (IL-4), and glycine, confirming high specificity for IL-6 [24].

The Scientist's Toolkit: Essential Reagents and Materials

The performance of an assay is directly dependent on the quality and appropriateness of its components. Below is a list of key research reagents and materials commonly used in advanced electrochemical assays, based on the protocols discussed.

Table 3: Key Research Reagent Solutions for Electrochemical Assay Development

| Reagent/Material | Function in the Assay | Example from Literature |

|---|---|---|

| Boron-Doped Diamond (BDD) Electrode | An electrode material known for its wide potential window, low background current, and high chemical stability, ideal for detecting electroactive species. | Used for the detection of emerging contaminants (caffeine, paracetamol) due to its strong resolving power [25]. |

| Prussian Blue Nanoparticles (PBNPs) | An electrocatalytic material and endogenous redox probe used for signal generation and amplification in biosensors. | Served as an excellent electrocatalytic layer in the IL-6 immunosensor [24]. |

| Thionin Acetate (TA) | An electroactive dye that provides amino groups for covalent antibody immobilization and enhances electron transport via π-π stacking. | Used to amplify the electrochemical signal and provide binding sites for antibodies in the IL-6 sensor [24]. |

| EDC/NHS Crosslinker | A carbodiimide crosslinking chemistry used to activate carboxyl groups, facilitating covalent conjugation between antibodies and functionalized surfaces. | Employed to conjugate the IL-6 antibody to the amine-functionalized sensor surface [24]. |

| Nafion Solution | A perfluorosulfonated ionomer used to coat electrodes, providing selectivity by repelling negatively charged interferents (e.g., ascorbic acid, uric acid). | A common material in biosensor construction. Although it is not explicitly mentioned in the cited papers, its function is analogous to that of the PBNPs/TA layer in providing selectivity. |

| Metal Oxide Semiconductors (MOS) | The sensitive layer in electronic nose (eNose) sensors; resistance changes upon exposure to volatile compounds. | Used in sensor arrays for detecting beer maturation volatiles like diacetyl [23]. |

The exploration confirms that sensitivity, noise, and detection limits are fundamentally linked through the signal-to-noise ratio. A high analytical sensitivity is a valuable asset, but its benefit is only fully realized when the noise level is effectively managed. The detection limit, therefore, is a measure of an assay's effective sensitivity under realistic operating conditions, not its theoretical potential.

For researchers and drug development professionals, this has critical implications:

- When comparing assays, the LOD/LOQ values are more meaningful than sensitivity specifications alone, but the method used to calculate these limits must be scrutinized.

- In assay development, effort should be directed not only at boosting the signal but also at minimizing sources of noise, such as improving electrode stability, optimizing buffer conditions, and using effective blocking agents to reduce non-specific binding.

- Electrochemical methods have demonstrated strong performance against traditional spectroscopic techniques, offering high selectivity, simplicity, and low cost, making them particularly suitable for decentralized clinical testing and point-of-care diagnostics [12] [24] [25].

Understanding the fundamental link between these parameters enables scientists to make informed decisions about method selection, critically evaluate analytical literature, and develop more robust and reliable assays for drug discovery and diagnostic applications.

In the field of analytical chemistry, particularly in the development of electrochemical assays for drug development, the accurate determination of the Limit of Detection (LOD) and Limit of Quantification (LOQ) is paramount. These parameters define the smallest concentration of an analyte that can be reliably detected and quantified, respectively, and are crucial for assessing the sensitivity and applicability of a bioanalytical method. The statistical parameters of blank signal, standard deviation, and signal-to-noise ratio form the foundational triad for calculating LOD and LOQ. This guide provides an objective comparison of different methodological approaches for determining these limits, supported by experimental data and detailed protocols from contemporary research.

Core Statistical Parameters and Their Definitions

Blank Signal

The blank signal (or blank response) is the measured signal value obtained when analyzing a sample that does not contain the target analyte. It represents the background noise or baseline of the analytical system. In the context of LOD/LOQ determination, a high blank signal can deteriorate the assay's capability by reducing the overall signal-to-noise ratio. Advanced sensing schemes specifically aim to suppress this blank peak current to improve sensitivity [26].

Standard Deviation

Standard deviation is a statistical measure of the dispersion or variability of a set of data points around their mean. A low standard deviation indicates that data points tend to be very close to the mean, while a high standard deviation indicates that the data are spread out over a wider range [27].

- Population Standard Deviation (σ): Used when the data encompass the entire population: σ = √[ Σ(xi − μ)² / N ] [27] [28]

- Sample Standard Deviation (s): Used when the data are a sample drawn from the population; dividing by n − 1 provides an unbiased estimate of the population variance: s = √[ Σ(xi − x̄)² / (n − 1) ] [29] [28]

In analytical chemistry, the standard deviation of the blank signal (σblank) is critically important, as it is used directly in classical formulas for LOD and LOQ [16].
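The difference between the two formulas is easy to see with Python's standard library, where `statistics.pstdev` divides by N and `statistics.stdev` divides by n − 1. The blank readings below are hypothetical.

```python
import statistics

# Hypothetical blank readings (arbitrary signal units).
blank = [2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0]

population_sd = statistics.pstdev(blank)  # divides by N
sample_sd = statistics.stdev(blank)       # divides by n - 1

print(f"population SD = {population_sd:.3f}, sample SD = {sample_sd:.3f}")
```

Replicate blank measurements are a sample of all possible blank results, so the sample form is normally the appropriate one for LOD and LOQ calculations, and the gap between the two grows as the number of replicates shrinks.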

Signal-to-Noise Ratio (SNR)

Signal-to-Noise Ratio (SNR or S/N) compares the level of a desired signal to the level of background noise. It is a key parameter for evaluating the performance and quality of analytical systems [30] [31].

- Power Ratio: SNR = Psignal / Pnoise

- Amplitude Ratio (for voltages): SNR = (Asignal / Anoise)²

- Decibel Scale: SNRdB = 10 log10(Psignal / Pnoise) or SNRdB = 20 log10(Asignal / Anoise) [30]

For LOD/LOQ assessment via the S/N method, a signal-to-noise ratio of 3:1 is typically accepted for LOD, and 10:1 for LOQ [17].
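For readers who work with instrument specifications quoted in decibels, the conversions can be sketched as below. It assumes the 3:1 and 10:1 criteria refer to amplitude ratios (for example, peak height over baseline noise); the function names are hypothetical.

```python
import math

def snr_db_from_power(p_signal, p_noise):
    """SNR in dB from a power ratio."""
    return 10 * math.log10(p_signal / p_noise)

def snr_db_from_amplitude(a_signal, a_noise):
    """SNR in dB from an amplitude (e.g., voltage or current) ratio."""
    return 20 * math.log10(a_signal / a_noise)

lod_db = snr_db_from_amplitude(3, 1)   # LOD criterion, S/N = 3
loq_db = snr_db_from_amplitude(10, 1)  # LOQ criterion, S/N = 10
print(f"S/N 3:1 = {lod_db:.2f} dB, S/N 10:1 = {loq_db:.2f} dB")
```

The two decibel formulas agree because power scales with the square of amplitude: an amplitude ratio of 10 is a power ratio of 100, and both give 20 dB.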

Comparative Analysis of LOD and LOQ Determination Methods

Different approaches for calculating LOD and LOQ can yield significantly different results, impacting the reported sensitivity of a method. The following table summarizes the core characteristics of these approaches.

Table 1: Comparison of Major Approaches for LOD and LOQ Determination

| Methodology | Theoretical Basis | Reported Performance | Advantages | Limitations |

|---|---|---|---|---|

| Standard Deviation of Blank & Slope | LOD = 3.3σ / S; LOQ = 10σ / S, where σ is the SD of the blank and S is the slope of the calibration curve [16] | Considered a classical strategy; may provide underestimated values compared to graphical methods [16] | Simple to calculate with minimal data requirements. | Can underestimate true limits; does not account for all method error sources across the concentration range. |

| Signal-to-Noise Ratio (S/N) | LOD: S/N ≈ 3; LOQ: S/N ≈ 10 [17] | In an HPLC-UV study, the S/N method provided the lowest LOD and LOQ values, indicating highest apparent sensitivity [17] | Intuitively linked to chromatographic performance; simple to implement directly from instrument data. | Requires a region where noise can be measured; can be instrument-specific. |

| Uncertainty Profile | A graphical tool based on β-content tolerance intervals and measurement uncertainty. LOQ is the lowest concentration where the uncertainty interval falls within acceptability limits (-λ, λ) [16] | Provides a relevant and realistic assessment; found to be more precise than classical methods, offering a reliable alternative [16] | Accounts for total method variability (repeatability, between-series variance); defines a full validity domain. | Computationally complex; requires a larger dataset from a validation study. |

| Accuracy Profile | A graphical approach based on total error (bias + standard deviation) and tolerance intervals [16] | Values for LOD and LOQ are in the same order of magnitude as those from the uncertainty profile [16] | Visually intuitive; considers both accuracy and precision to define the quantitation range. | Requires a comprehensive set of validation data. |

Table 2: Experimental LOD/LOQ Values from a Comparative HPLC Study of Carbamazepine and Phenytoin [17]

| Drug Compound | Calculation Method | Limit of Detection (LOD) | Limit of Quantification (LOQ) |
|---|---|---|---|
| Carbamazepine | Signal-to-Noise (S/N) | Lowest Value | Lowest Value |
| Carbamazepine | Standard Deviation of Response & Slope (SDR) | Highest Value | Highest Value |
| Phenytoin | Signal-to-Noise (S/N) | Lowest Value | Lowest Value |
| Phenytoin | Standard Deviation of Response & Slope (SDR) | Highest Value | Highest Value |

Detailed Experimental Protocol: An Electrochemical Aptasensor Case Study

The following detailed methodology is adapted from a proof-of-principle study for a blank peak current-suppressed electrochemical aptameric sensor, which achieved a detection limit of 10⁻¹⁰ M for adenosine [26].

Research Reagent Solutions and Materials

Table 3: Essential Materials and Reagents for the Electrochemical Aptasensor

| Item Name | Function / Role in the Experiment |

|---|---|

| Thiolated Aptamer Probe | The core recognition element, immobilized on the gold electrode surface. Its sequence is engineered to undergo conformational change upon target binding [26]. |

| Ferrocene (Fc) Monocarboxylic Acid | An electroactive label. It is conjugated to the aptamer and provides the measurable redox current signal [26]. |

| EcoRI Endonuclease | A restriction enzyme that acts as a "molecular scissors." It cleaves double-stranded DNA regions, serving as the key element for signal suppression in the absence of the target [26]. |

| Gold Electrode | The transducer platform. It is polished, cleaned, and used to self-assemble the thiolated aptamer monolayer [26]. |

| EDC & NHS | Coupling reagents (N-(3-dimethylaminopropyl)-N'-ethylcarbodiimide and N-Hydroxysuccinimide). They activate the carboxylic group of Fc for conjugation to the amine-modified end of the aptamer [26]. |

| Differential Pulse Voltammetry (DPV) | The electrochemical technique used to measure the Faradaic current from the Ferrocene label. Its high sensitivity makes it ideal for low-concentration detection [26]. |

Step-by-Step Workflow

- Probe Preparation: The thiol- and amine-modified aptamer is functionalized with Ferrocene monocarboxylic acid using EDC/NHS chemistry in 0.3 M buffer, resulting in an Fc-aptamer conjugate [26].

- Sensor Fabrication:

  - A gold electrode is meticulously polished and cleaned, including incubation with piranha solution, to ensure a clean surface for aptamer immobilization [26].

  - The Fc-aptamer conjugate is immobilized onto the clean gold electrode surface via the thiol-gold affinity, forming a self-assembled monolayer [26].

- Assay Procedure and Signaling Mechanism:

  - In the absence of adenosine (Blank Signal): The engineered aptamer folds into a hairpin structure, creating a double-stranded region recognizable by EcoRI. Upon addition of the enzyme, this region is cleaved, releasing the Fc label from the electrode surface. This results in a suppressed blank peak current in the DPV measurement [26].

  - In the presence of adenosine (Target Signal): Adenosine binding induces a conformational change in the aptamer, dissociating the double-stranded segment. The aptamer is no longer cleavable by EcoRI. When the enzyme is added, the Fc-labeled aptamer remains intact on the electrode, generating a strong, measurable peak current in the DPV [26].

- Data Analysis: The DPV peak current is used as the analytical signal. A calibration curve of current versus adenosine concentration is constructed, from which the LOD and LOQ can be derived using the standard deviation of the blank and the slope of the calibration curve [26].
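The data-analysis step can be sketched in a few lines of Python. This is a minimal illustration, not the procedure used in [26]: the current values are invented, a linear current-versus-concentration response is assumed, and the multipliers 3.3 and 10 are the conventional ICH factors, which the source does not specify for this study. The function name `lod_loq_from_blank` is hypothetical.

```python
import numpy as np

# Hypothetical DPV peak currents in µA; illustrative values, not data from [26].
blank_currents = np.array([0.051, 0.048, 0.055, 0.047, 0.052, 0.049, 0.053])
conc_nM = np.array([0.1, 0.5, 1.0, 5.0, 10.0])      # adenosine standards (assumed)
peak_uA = np.array([0.12, 0.41, 0.78, 3.70, 7.35])  # mean peak current per standard


def lod_loq_from_blank(blanks, conc, signal, k_lod=3.3, k_loq=10.0):
    """Return (LOD, LOQ, slope) from the blank SD and the calibration slope.

    Assumes a linear response; k_lod and k_loq are assumed multipliers.
    """
    sd_blank = np.std(blanks, ddof=1)               # sample standard deviation of the blank
    slope, _intercept = np.polyfit(conc, signal, 1)  # least-squares straight line
    return k_lod * sd_blank / slope, k_loq * sd_blank / slope, slope


lod, loq, slope = lod_loq_from_blank(blank_currents, conc_nM, peak_uA)
print(f"slope = {slope:.3f} µA/nM, LOD = {lod:.4f} nM, LOQ = {loq:.4f} nM")
```

Because both limits scale with the blank standard deviation, a design that suppresses the blank peak current and its scatter lowers the calculated LOD and LOQ directly.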

Visualizing Signaling Pathways and Workflows

Signaling Mechanism of the Electrochemical Aptasensor

This diagram illustrates the "signal-on" mechanism that effectively suppresses the blank signal.

Experimental Workflow for Sensor Preparation and Measurement

This flowchart details the operational steps from probe preparation to data analysis.

The determination of LOD and LOQ is a critical step in validating electrochemical assays and other bioanalytical methods. As demonstrated, the choice of statistical methodology, whether based on standard deviation and slope, signal-to-noise ratio, or advanced graphical tools like the uncertainty profile, can significantly influence the reported sensitivity parameters. The classical standard deviation method, while simple, may underestimate the LOD and LOQ. The signal-to-noise ratio can yield the most optimistic (lowest) values, whereas graphical strategies like the uncertainty profile provide a more comprehensive and realistic assessment of a method's capabilities by incorporating total measurement uncertainty. Researchers must therefore select their calculation approach judiciously, align it with regulatory guidelines where applicable, and transparently report the method used to ensure the reliability and comparability of data in pharmaceutical development.

Calculation Methods and Sensor Applications in Drug Development and Clinical Analysis

The determination of the Limit of Detection (LOD) and Limit of Quantification (LOQ) is a fundamental requirement in the validation of analytical and bioanalytical methods, establishing the lowest concentrations of an analyte that can be reliably detected and quantified, respectively [16]. These parameters are crucial for understanding the capabilities and limitations of an analytical procedure, ensuring it is "fit for purpose" [1] [10]. Despite their importance, the absence of a universal protocol for establishing these limits has led to varied approaches among researchers and analysts [16]. This comparative review focuses on three predominant strategies—signal-to-noise ratio, blank measurement, and calibration curve methods—within the context of electrochemical assays and bioanalytical methods. The selection of an appropriate methodology is not merely a procedural formality but a critical decision that impacts the reliability, accuracy, and regulatory acceptance of analytical data, particularly in fields such as pharmaceutical development and clinical diagnostics where electrochemical techniques are increasingly employed [18] [32].

Theoretical Foundations of LOD and LOQ

The LOD is defined as the lowest analyte concentration that can be reliably distinguished from the analytical background or blank, but not necessarily quantified as an exact value [7] [1]. In practical terms, it represents the concentration at which an analyst can state, "I'm sure there is a peak there for my compound, but I cannot tell you how much is there" [7]. In contrast, the LOQ is the lowest concentration at which the analyte can not only be reliably detected but also quantified with acceptable precision and accuracy under stated experimental conditions [7] [1]. The relationship between these parameters is hierarchical, with the LOQ necessarily equal to or greater than the LOD.

The Clinical and Laboratory Standards Institute (CLSI) guideline EP17 further refines this hierarchy by introducing the Limit of Blank (LoB), defined as the highest apparent analyte concentration expected to be found when replicates of a blank sample containing no analyte are tested [1]. The LOD is then determined in relation to the LoB, specifically as the lowest analyte concentration likely to be reliably distinguished from the LoB [1]. These conceptual definitions provide the foundation upon which different calculation methodologies are built, each with distinct statistical underpinnings and procedural requirements.

Table 1: Fundamental Definitions of Analytical Limits

| Term | Definition | Key Characteristic |

|---|---|---|

| Limit of Blank (LoB) | Highest apparent analyte concentration expected from a blank sample | Establishes the baseline noise level; 95% of blank values fall below this limit [1] |

| Limit of Detection (LOD) | Lowest analyte concentration reliably distinguished from LoB | Confirms analyte presence but not precise quantity [7] [1] |

| Limit of Quantification (LOQ) | Lowest concentration quantifiable with acceptable precision and accuracy | Meets predefined targets for bias and imprecision [7] [1] |

Methodological Approaches for LOD and LOQ Determination

Signal-to-Noise Ratio (S/N) Approach

The signal-to-noise ratio method is one of the most straightforward techniques for estimating LOD and LOQ, particularly prevalent in chromatographic and electrochemical analyses. This approach involves comparing the magnitude of the analyte signal to the background noise level of the measurement system. The LOD is typically defined as a concentration that yields a signal-to-noise ratio of 3:1, while the LOQ corresponds to a ratio of 10:1 [8].

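The practical implementation involves measuring the standard deviation of the blank noise (σ) and the mean signal intensity of a low-concentration analyte standard. For the result to carry concentration units, S in the expressions below is taken as that mean signal divided by the concentration of the standard, that is, the signal per unit concentration. The calculation proceeds as follows: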

- LOD = 3 × σ / S

- LOQ = 10 × σ / S [8]

In experimental practice, the noise can be determined from a blank injection, and modern instrumentation software often includes automated functions to "Calculate USP, EP and JP s/n" using noise centered on the peak region in a blank injection [33]. A key advantage of this method is its straightforward implementation and intuitive interpretation. However, challenges include instrumental noise variability and potential interference from complex sample matrices, which may necessitate matrix-matched standards or sample preparation techniques to minimize these effects [8].
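As a rough illustration of the S/N estimate, the sketch below converts a measured noise level and the signal of one low-concentration standard into the concentrations expected to give S/N = 3 and S/N = 10. It assumes the signal is proportional to concentration near the limit, which should be checked experimentally. All numbers and the helper names (`signal_to_noise`, `concentration_at_sn`) are hypothetical.

```python
import statistics


def signal_to_noise(mean_signal, noise_sd):
    """Ratio of the analyte signal to the standard deviation of the blank noise."""
    return mean_signal / noise_sd


def concentration_at_sn(target_sn, noise_sd, mean_signal, standard_conc):
    """Concentration expected to give `target_sn`, assuming signal is proportional to concentration."""
    sensitivity = mean_signal / standard_conc  # signal per unit concentration
    return target_sn * noise_sd / sensitivity


# Hypothetical baseline-corrected blank readings (µA) and one low standard.
blank_trace = [0.010, -0.008, 0.012, -0.011, 0.009, -0.012, 0.011, -0.011]
noise_sd = statistics.stdev(blank_trace)
standard_conc = 5.0   # µg/mL (assumed)
mean_signal = 0.25    # µA at standard_conc (assumed)

lod_conc = concentration_at_sn(3, noise_sd, mean_signal, standard_conc)
loq_conc = concentration_at_sn(10, noise_sd, mean_signal, standard_conc)
print(f"S/N of standard = {signal_to_noise(mean_signal, noise_sd):.1f}")
print(f"LOD = {lod_conc:.3f} µg/mL, LOQ = {loq_conc:.3f} µg/mL")
```

Estimates obtained this way are best confirmed by measuring a standard prepared at the calculated concentration and checking that the observed S/N is close to the target.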

Blank Measurement and Statistical Approach

The blank measurement method, extensively detailed in the CLSI EP17 guideline, adopts a rigorous statistical framework based on the analysis of blank samples and low-concentration specimens [1]. This approach introduces the critical parameter of Limit of Blank (LoB) as a foundation for determining LOD.

The methodology involves the following steps and calculations:

- LoB Determination: Test replicates of a blank sample (containing no analyte) and calculate:

  - LoB = mean~blank~ + 1.645(SD~blank~) [1]

  - This establishes the threshold above which an observed signal is unlikely to come from a blank sample (assuming a Gaussian distribution).

- LOD Determination: Test replicates of a sample containing a low concentration of analyte and calculate:

  - LOD = LoB + 1.645(SD~low concentration sample~) [1]

  - This ensures that 95% of low concentration sample measurements exceed the LoB.

This approach is considered more statistically rigorous than the S/N method because it empirically verifies the distinction between blank and low-concentration samples. The EP17 protocol recommends testing 60 replicates for establishing these parameters and 20 replicates for verification [1]. A significant advantage is its direct assessment of the method's ability to distinguish between blank and analyte-containing samples. However, it requires substantial experimental work and may be challenging for endogenous analytes where an analyte-free matrix is difficult to obtain [1] [10].
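The two EP17 formulas above translate directly into code. The following sketch uses only the Python standard library; the replicate values are invented and far fewer than the 60 replicates the protocol recommends, so it illustrates the arithmetic only. The function names are hypothetical.

```python
import statistics

Z_95 = 1.645  # one-sided 95% quantile of the standard normal distribution


def limit_of_blank(blank_results):
    """LoB = mean(blank) + 1.645 * SD(blank), assuming Gaussian blank results."""
    return statistics.mean(blank_results) + Z_95 * statistics.stdev(blank_results)


def limit_of_detection(blank_results, low_conc_results):
    """LOD = LoB + 1.645 * SD(low-concentration sample)."""
    return limit_of_blank(blank_results) + Z_95 * statistics.stdev(low_conc_results)


# Hypothetical apparent concentrations (arbitrary units); illustrative only.
blank_results = [0.02, 0.00, 0.05, 0.01, 0.03, 0.04, 0.00, 0.02, 0.03, 0.01]
low_conc_results = [0.21, 0.18, 0.25, 0.19, 0.23, 0.20, 0.24, 0.17, 0.22, 0.21]

lob = limit_of_blank(blank_results)
lod = limit_of_detection(blank_results, low_conc_results)
print(f"LoB = {lob:.3f}, LOD = {lod:.3f}")
```

Both functions use the sample standard deviation (`statistics.stdev`). If the blank results are clearly non-Gaussian, the parametric formula is not appropriate and a non-parametric percentile would be needed instead.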

Calibration Curve Approach

The calibration curve method, endorsed by the International Council for Harmonisation (ICH) Q2(R1) guideline, leverages statistical parameters derived from linear regression analysis of calibration data [7] [10]. This approach is widely applicable across various analytical techniques, including electrochemical assays.

The procedure involves:

- Generating a calibration curve with multiple concentrations, typically in the range of the expected LOQ.

- Performing linear regression analysis to obtain the slope (S) and the standard error of the regression.

- Calculating the parameters as follows:

  - LOD = 3.3 × σ / S

  - LOQ = 10 × σ / S [7]

Here, σ represents the standard deviation of the response, which can be estimated as the standard error of the regression, and S is the slope of the calibration curve [7]. The standard error is readily obtained from the regression output of most data systems, including Microsoft Excel [7]. A significant advantage of this method is its foundation in established statistical principles and minimal additional experimentation beyond routine calibration. However, the values obtained should be considered estimates until validated by injecting multiple samples (e.g., n=6) at the calculated LOD and LOQ concentrations to demonstrate they meet performance requirements [7].
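For readers working outside a spreadsheet, the same calculation can be sketched with NumPy. Here σ is taken as the standard error of the regression (residual standard deviation with n − 2 degrees of freedom), one of the estimates described above. The calibration data and the function name `lod_loq_from_calibration` are hypothetical.

```python
import numpy as np


def lod_loq_from_calibration(conc, response):
    """Return (LOD, LOQ, slope, s_yx) from a straight-line calibration.

    s_yx is the standard error of the regression: sqrt(SS_residual / (n - 2)).
    """
    conc = np.asarray(conc, dtype=float)
    response = np.asarray(response, dtype=float)
    slope, intercept = np.polyfit(conc, response, 1)
    residuals = response - (slope * conc + intercept)
    s_yx = np.sqrt(np.sum(residuals ** 2) / (len(conc) - 2))
    return 3.3 * s_yx / slope, 10.0 * s_yx / slope, slope, s_yx


# Hypothetical low-range calibration: concentration (µM) vs. peak current (µA).
conc = [0.5, 1.0, 2.0, 4.0, 6.0, 8.0]
current = [0.26, 0.49, 1.03, 1.98, 3.05, 3.97]

lod, loq, slope, s_yx = lod_loq_from_calibration(conc, current)
print(f"slope = {slope:.4f} µA/µM, s_yx = {s_yx:.4f} µA")
print(f"LOD = {lod:.3f} µM, LOQ = {loq:.3f} µM")
```

As noted above, the resulting numbers are estimates. They still need to be confirmed by replicate measurements at the calculated LOD and LOQ concentrations.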

Comparative Analysis of Methods

Method Comparison Table

Table 2: Comparison of LOD and LOQ Calculation Methods

| Aspect | Signal-to-Noise Ratio | Blank Measurement (CLSI EP17) | Calibration Curve (ICH) |

|---|---|---|---|

| Theoretical Basis | Instrumental signal and noise comparison | Statistical distribution of blank and low-concentration samples | Regression parameters from calibration curve |

| Key Formulas | LOD = 3 × σ / S; LOQ = 10 × σ / S [8] | LoB = mean~blank~ + 1.645(SD~blank~); LOD = LoB + 1.645(SD~low concentration sample~) [1] | LOD = 3.3 × σ / S; LOQ = 10 × σ / S [7] |

| Experimental Requirements | Blank and low-concentration sample | 60 replicates for establishment; 20 for verification [1] | Calibration curve with ~5-8 concentration levels |

| Advantages | Simple, intuitive, widely implemented in software [33] [8] | Statistically rigorous, empirically verified [1] | Uses routine calibration data, established in ICH guidelines [7] |

| Limitations | Sensitive to noise variability, matrix effects [8] | Labor-intensive, challenging for endogenous analytes [1] [10] | May provide underestimated values if not properly validated [16] |

| Best Applications | Routine analysis, chromatographic methods | Regulatory submissions, clinical diagnostics [1] | Pharmaceutical analysis, research methods [7] [16] |

Practical Implementation in Electrochemical Assays

Electrochemical biosensors have gained significant traction in clinical diagnostics and point-of-care testing due to their portability, simplicity, and reliability [18] [32]. The determination of LOD and LOQ in these systems presents unique considerations. For instance, in the quantification of ethanol in plasma using an unmodified screen-printed carbon electrode (SPCE), researchers employed a signal-to-noise approach, establishing a detection limit of 40.0 μg/mL (S/N > 3) [32]. This application highlights the importance of matrix effect management, which was addressed through a 100-fold dilution strategy to eliminate plasma matrix interference while maintaining adequate detection sensitivity [32].